# AgentField — Complete Documentation Corpus

# Contract-Version: 2026-03-24-v1

# Docs-Revision: b581ec4ced1c

# Generated-At: 2026-03-24T17:32:27.000Z

# Primary JSON manifest: https://agentfield.ai/docs-ai.json

This file is the machine-readable documentation corpus for AgentField. Code blocks are included only for pages that were explicitly vetted against the current source of truth; all other pages omit fenced examples to reduce drift. Prefer the JSON manifest when you need one-shot discovery, and prefer per-page markdown endpoints when you need a single focused page.

## Agents

URL: https://agentfield.ai/docs/build/building-blocks/agents

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/agents

Last-Modified: 2026-03-24T17:04:50.000Z

Category: building-blocks

Difficulty: beginner

Keywords: agent, container, serve, run, lifecycle, constructor, config

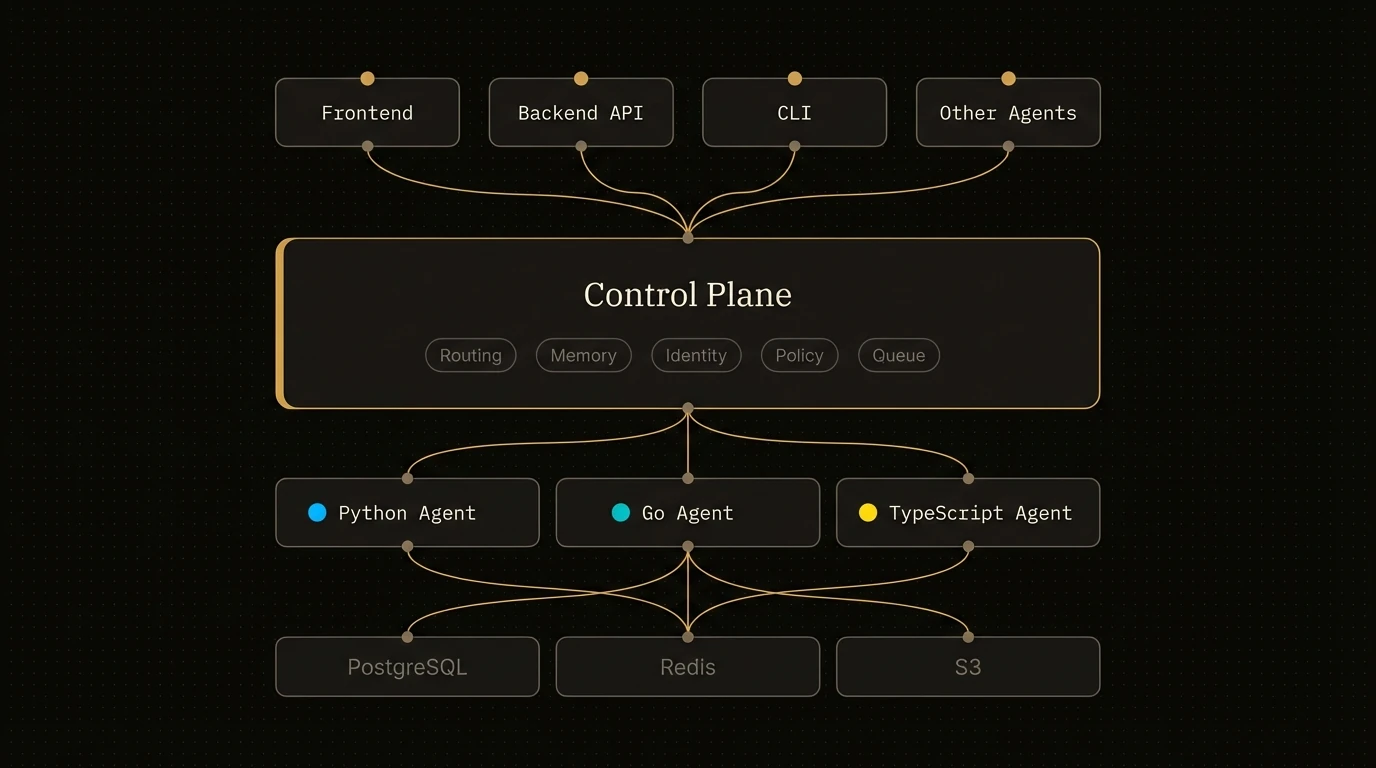

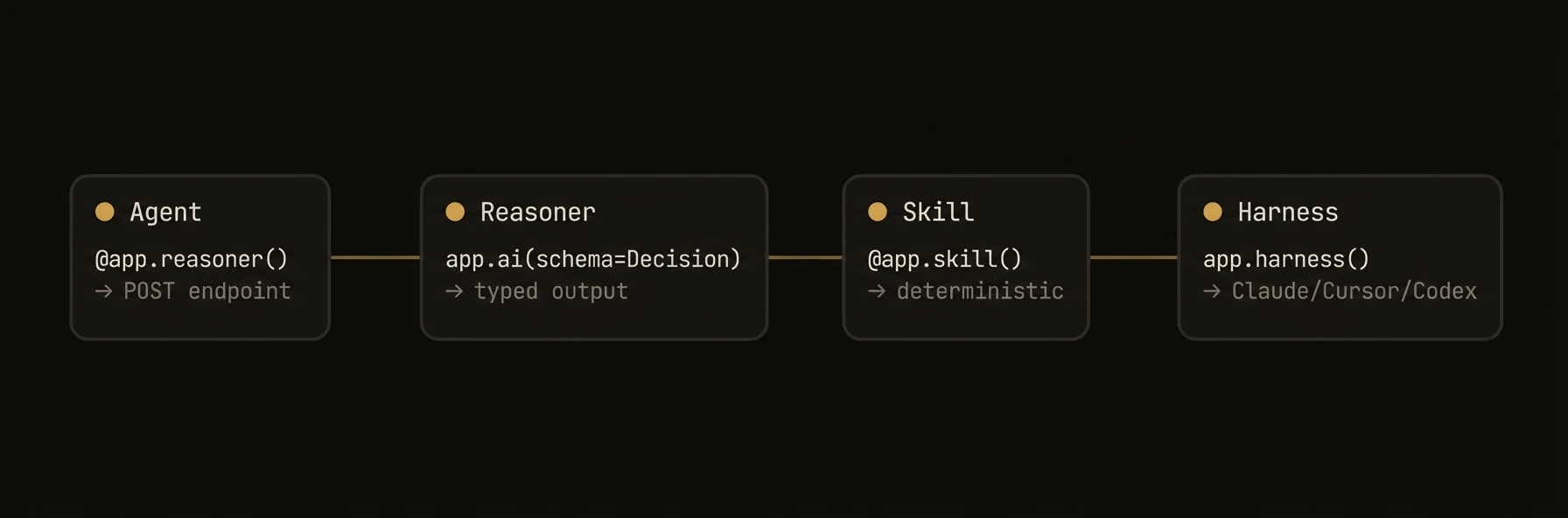

Summary: The core container that hosts reasoners, skills, and connects to the AgentField control plane

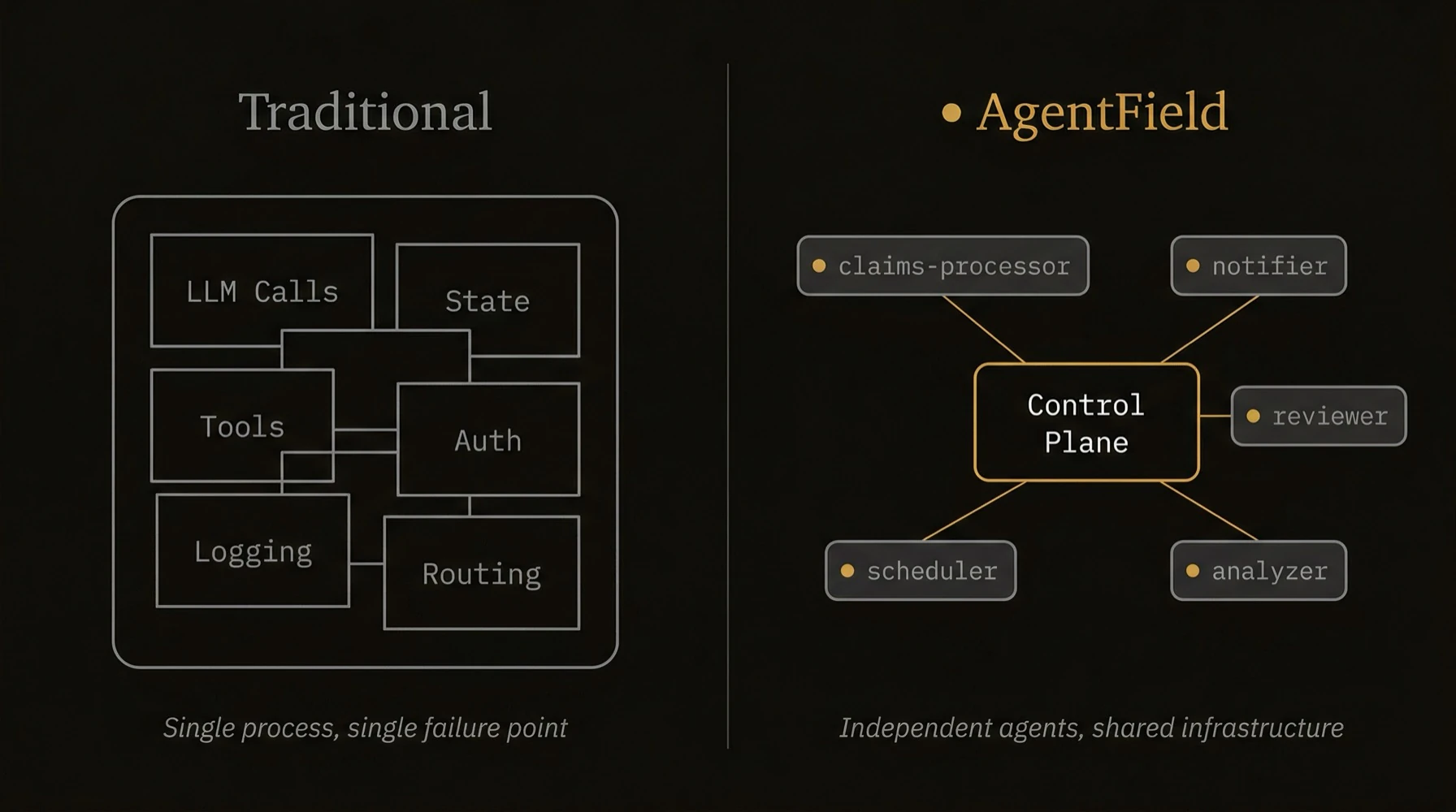

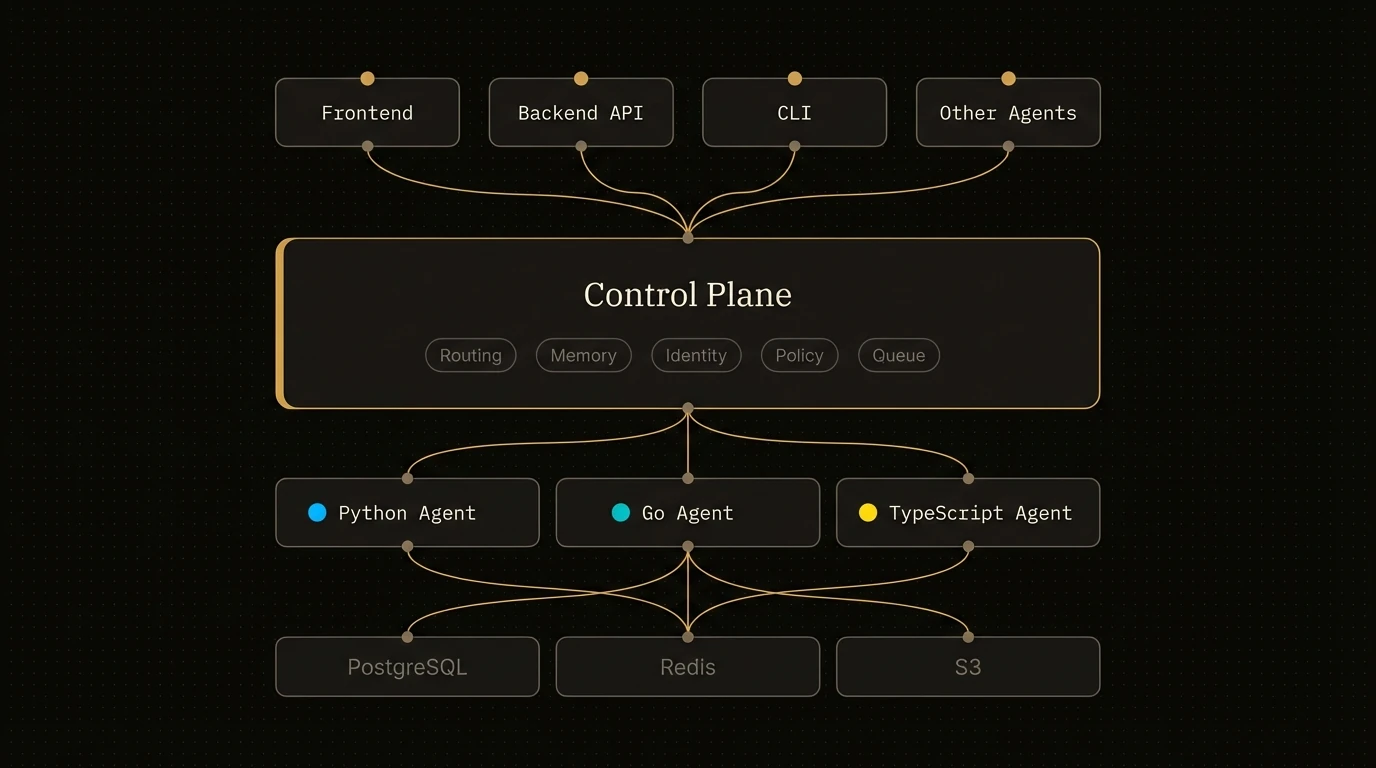

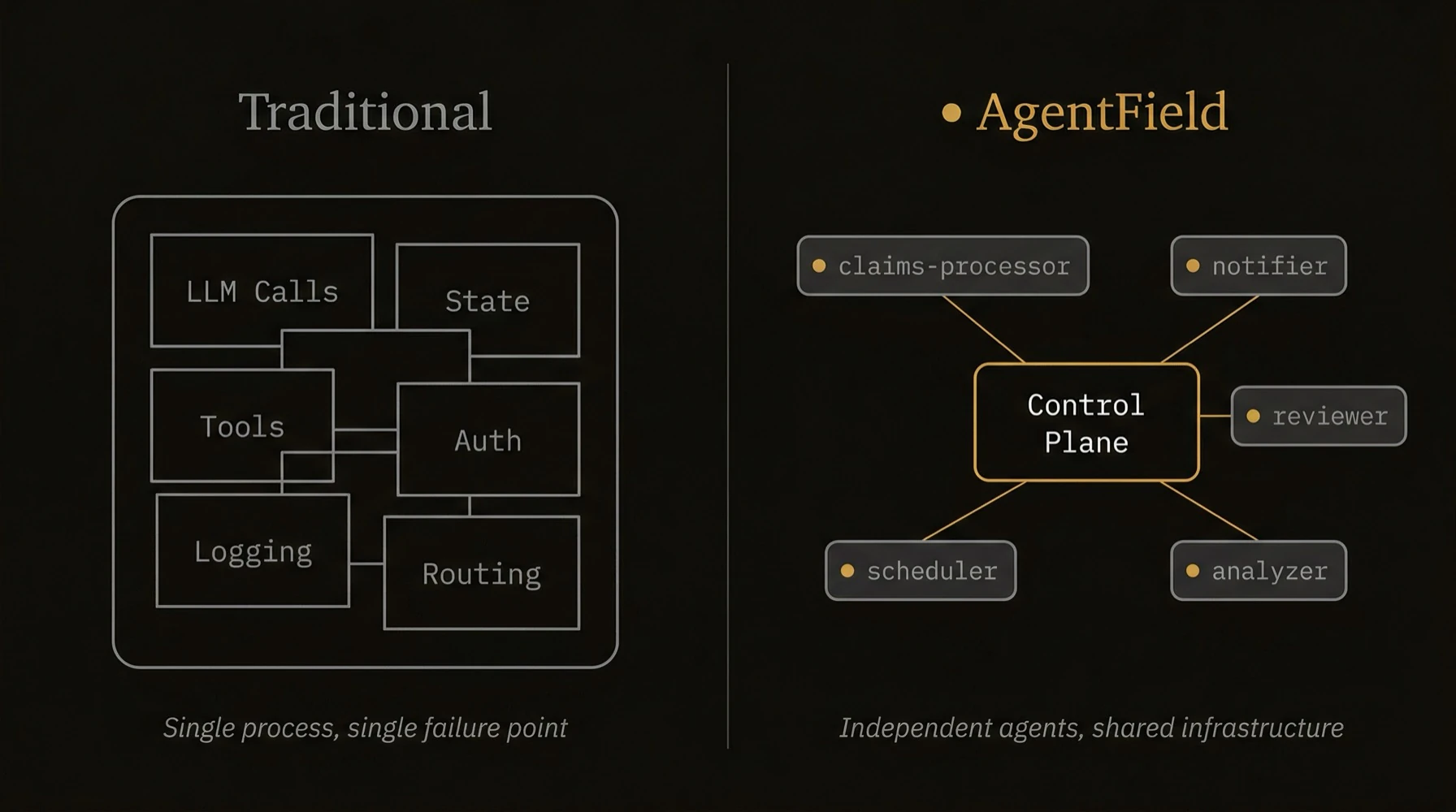

The top-level container that turns your code into a discoverable, governed, production microservice.

Without `Agent`, you would wire an HTTP server, registration, routing, identity, tracing, memory access, and cross-agent calls separately. With `Agent`, that infrastructure boundary is the object you instantiate.

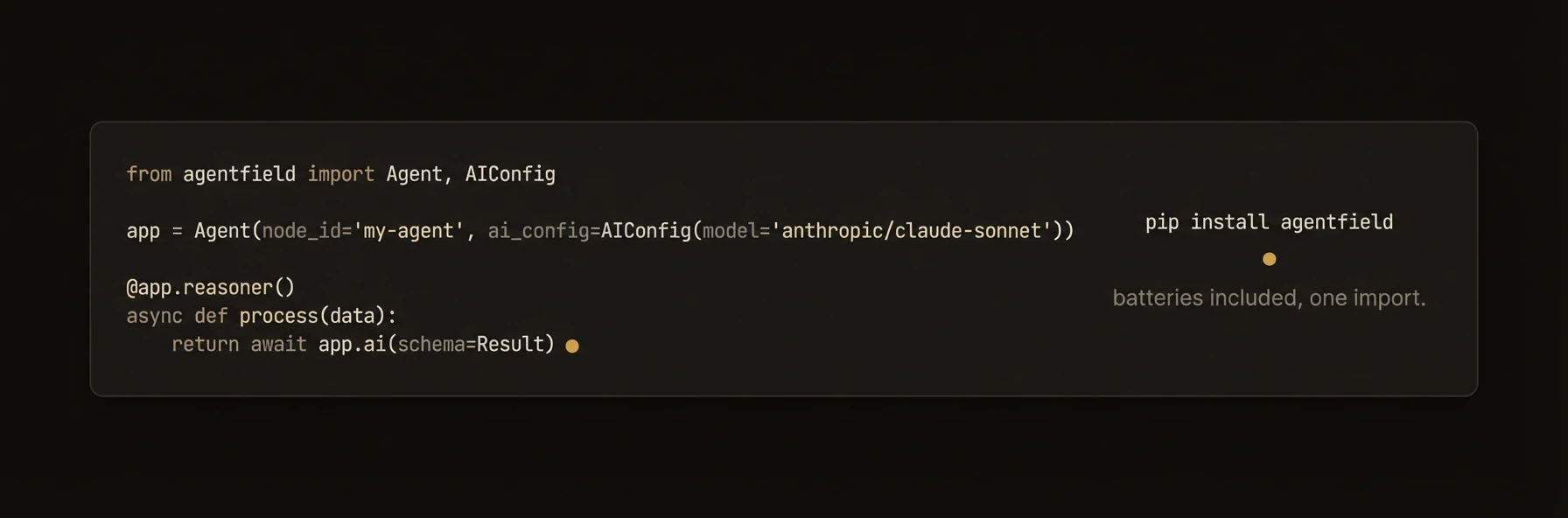

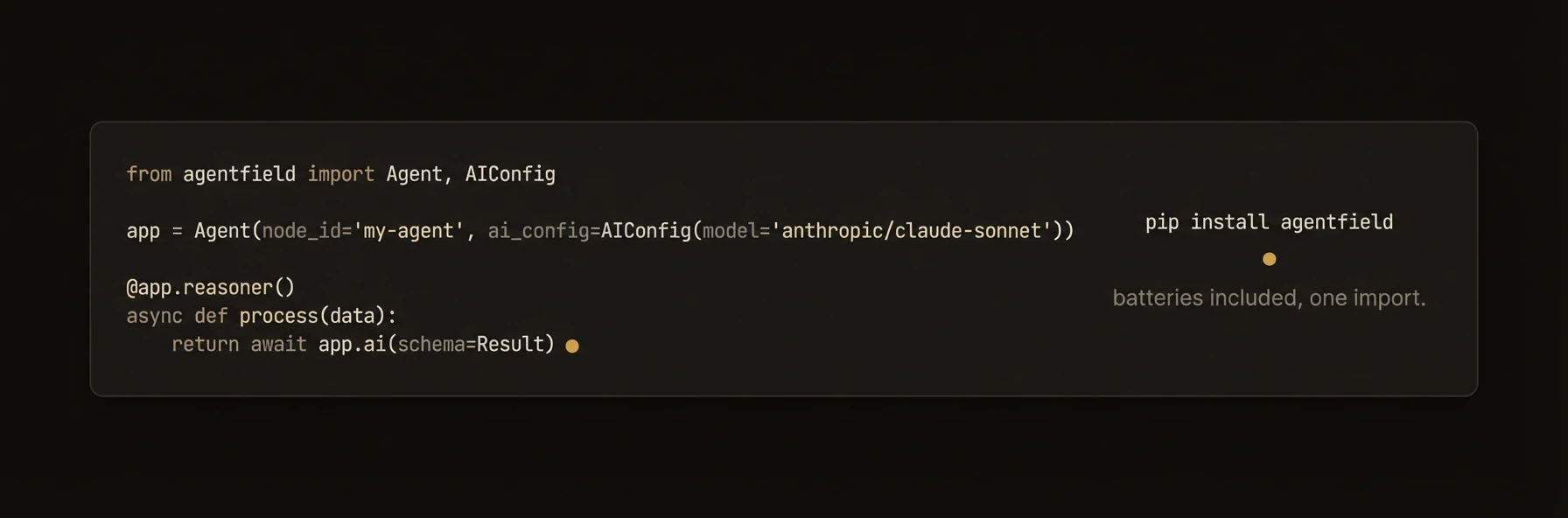

### Python

```python

from agentfield import Agent, AIConfig

from pydantic import BaseModel

app = Agent(

node_id="support-triage", # unique ID in the network

ai_config=AIConfig(model="anthropic/claude-sonnet-4-20250514"),

)

class TicketClassification(BaseModel):

priority: str # "critical" | "high" | "normal" | "low"

department: str # route to the right team

summary: str # one-line summary for the queue

@app.reasoner() # AI-powered — gets an LLM client automatically

async def classify_ticket(subject: str, body: str, customer_id: str) -> TicketClassification:

result = await app.ai(

system="You triage customer support tickets.",

user=f"Subject: {subject}\n\n{body}",

schema=TicketClassification, # validated, typed output

)

await app.memory.set(f"ticket:{customer_id}:last_priority", result.priority)

return result

@app.skill() # deterministic — no AI, just business logic

def escalation_policy(priority: str) -> dict:

sla = {"critical": 15, "high": 60, "normal": 240, "low": 1440}

return {"sla_minutes": sla.get(priority, 240)}

app.run() # starts HTTP server + registers with control plane

# POST /reasoners/classify_ticket → AI classification

# POST /skills/escalation_policy → SLA lookup

```

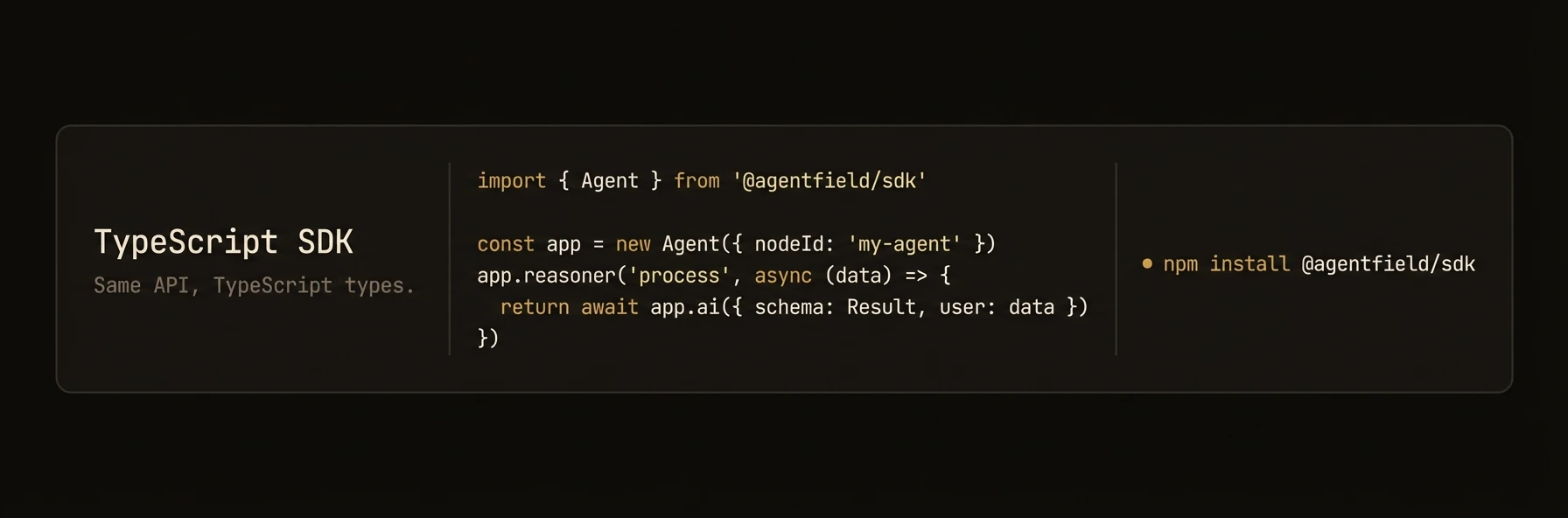

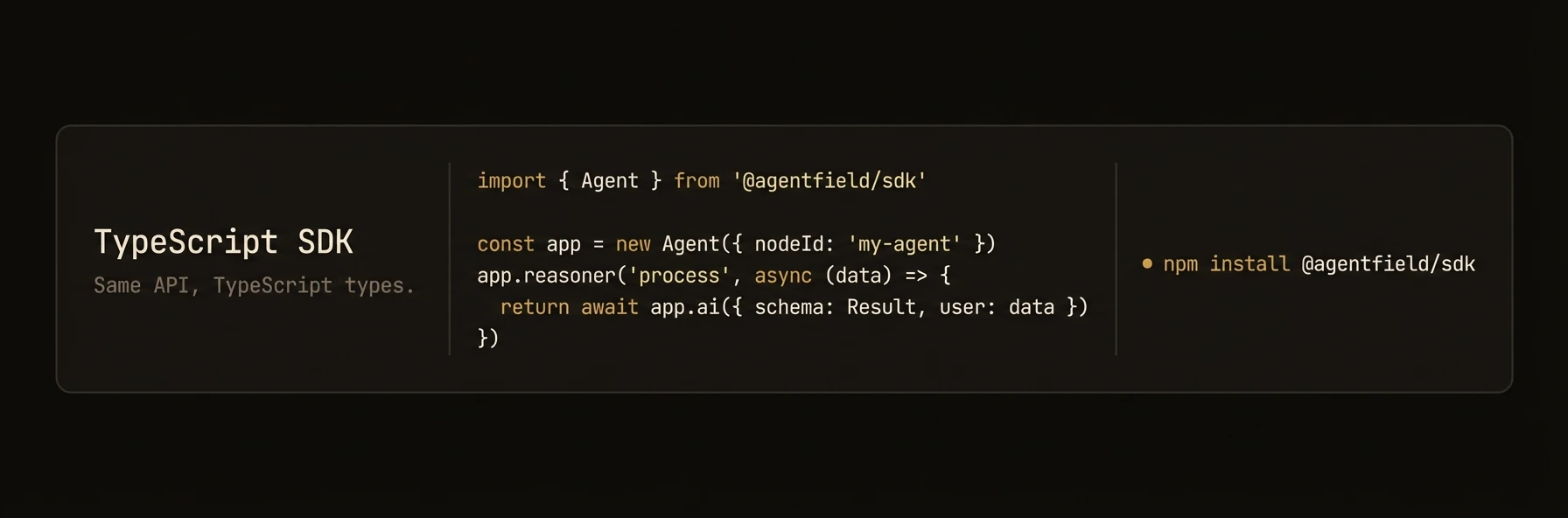

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

import { z } from 'zod';

const agent = new Agent({

nodeId: 'support-triage', // unique ID in the network

aiConfig: { provider: 'anthropic', model: 'claude-sonnet-4-20250514' },

});

const TicketClassification = z.object({

priority: z.enum(['critical', 'high', 'normal', 'low']),

department: z.string(), // route to the right team

summary: z.string(), // one-line summary for the queue

});

agent.reasoner('classifyTicket', async (ctx) => { // AI-powered

const result = await ctx.ai(

`Subject: ${ctx.input.subject}\n\n${ctx.input.body}`,

{

system: 'You triage customer support tickets.',

schema: TicketClassification, // validated, typed output

},

);

await ctx.memory.set(`ticket:${ctx.input.customerId}:lastPriority`, result.priority);

return result;

});

agent.skill('escalationPolicy', (ctx) => { // deterministic — no AI

const sla: Record = { critical: 15, high: 60, normal: 240, low: 1440 };

return { slaMinutes: sla[ctx.input.priority] ?? 240 };

});

agent.serve(); // starts HTTP server + registers with control plane

```

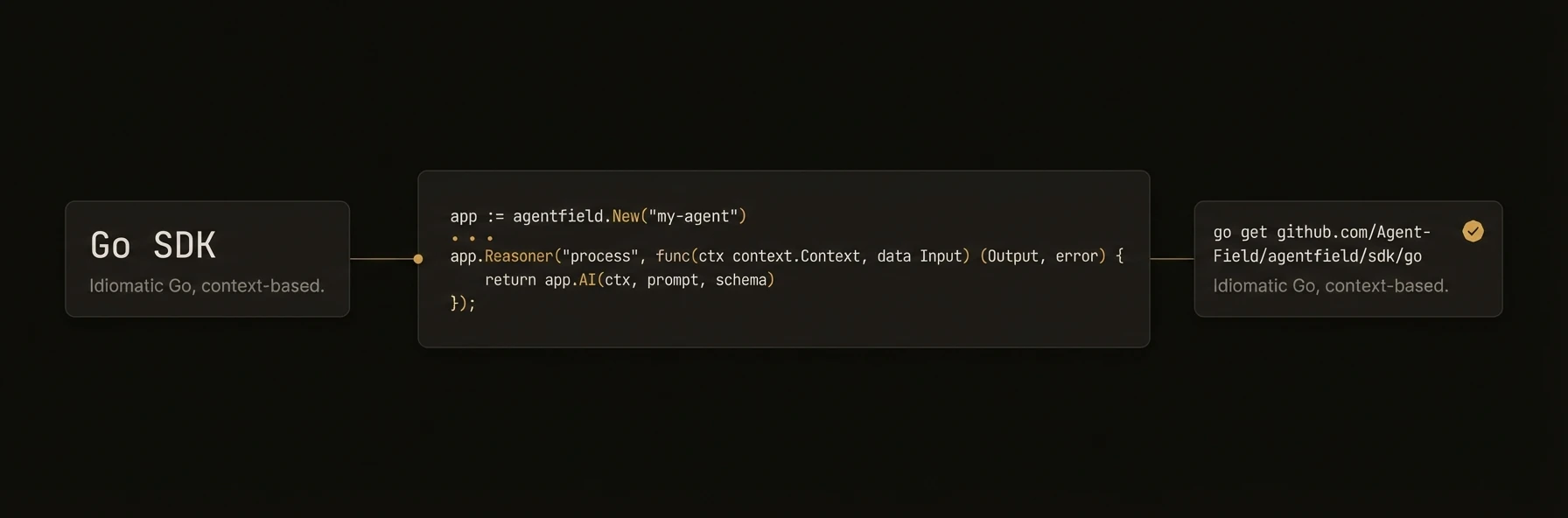

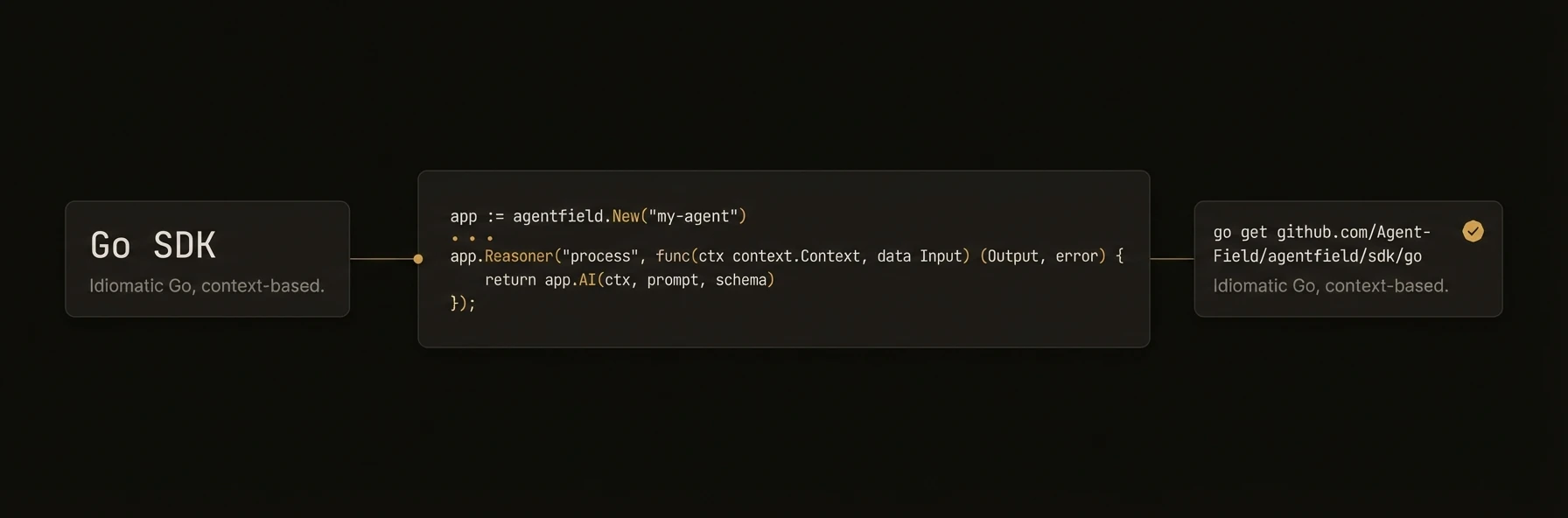

### Go

```go

package main

import (

"context"

"log"

"github.com/Agent-Field/agentfield/sdk/go/agent"

"github.com/Agent-Field/agentfield/sdk/go/ai"

)

func main() {

a, _ := agent.New(agent.Config{

NodeID: "support-triage", // unique ID in the network

Version: "1.0.0",

AgentFieldURL: "http://localhost:8080",

AIConfig: &ai.Config{Model: "anthropic/claude-sonnet-4-20250514"},

})

// AI-powered — gets an LLM client automatically

a.RegisterReasoner("classify_ticket", func(ctx context.Context, input map[string]any) (any, error) {

subject, _ := input["subject"].(string)

body, _ := input["body"].(string)

return map[string]any{"priority": "high", "department": "billing", "summary": subject}, nil

})

// Deterministic — no AI, just business logic

a.RegisterSkill("escalation_policy", func(ctx context.Context, input map[string]any) (any, error) {

sla := map[string]int{"critical": 15, "high": 60, "normal": 240, "low": 1440}

priority, _ := input["priority"].(string)

return map[string]any{"sla_minutes": sla[priority]}, nil

})

log.Fatal(a.Run(context.Background())) // starts HTTP server + registers with control plane

}

```

---

**What just happened**

- One `Agent` instance exposed both AI and deterministic operations

- The reasoner got model access, validation, and workflow context automatically

- The deterministic function became a separate callable endpoint without extra server code

- In all three SDKs, deterministic endpoints can be registered separately from AI-powered reasoners

- The memory write used the same execution context as the reasoner

Example generated surface:

```text

Python/TypeScript:

POST /reasoners/classify_ticket

POST /skills/escalation_policy

target: support-triage.classify_ticket

Go equivalent:

POST /reasoners/classify_ticket

POST /skills/escalation_policy

```

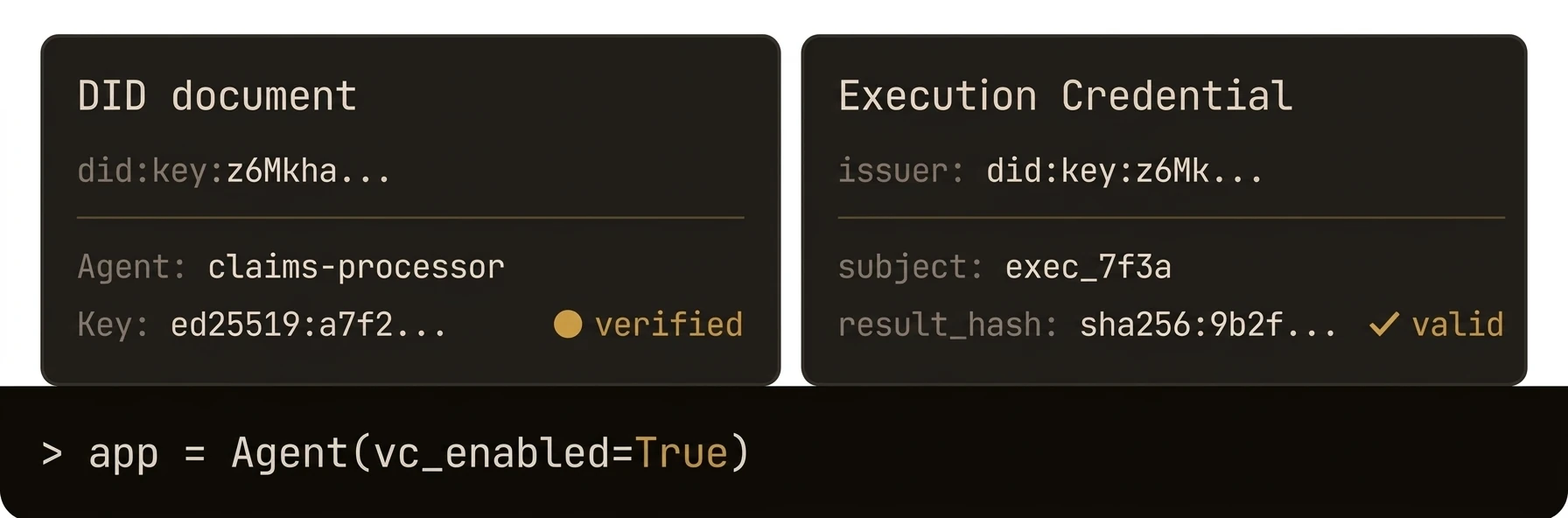

### What You Get

- **HTTP server** with auto-generated REST endpoints for every reasoner and skill

- **Control plane registration** with heartbeat, lease renewal, and graceful shutdown

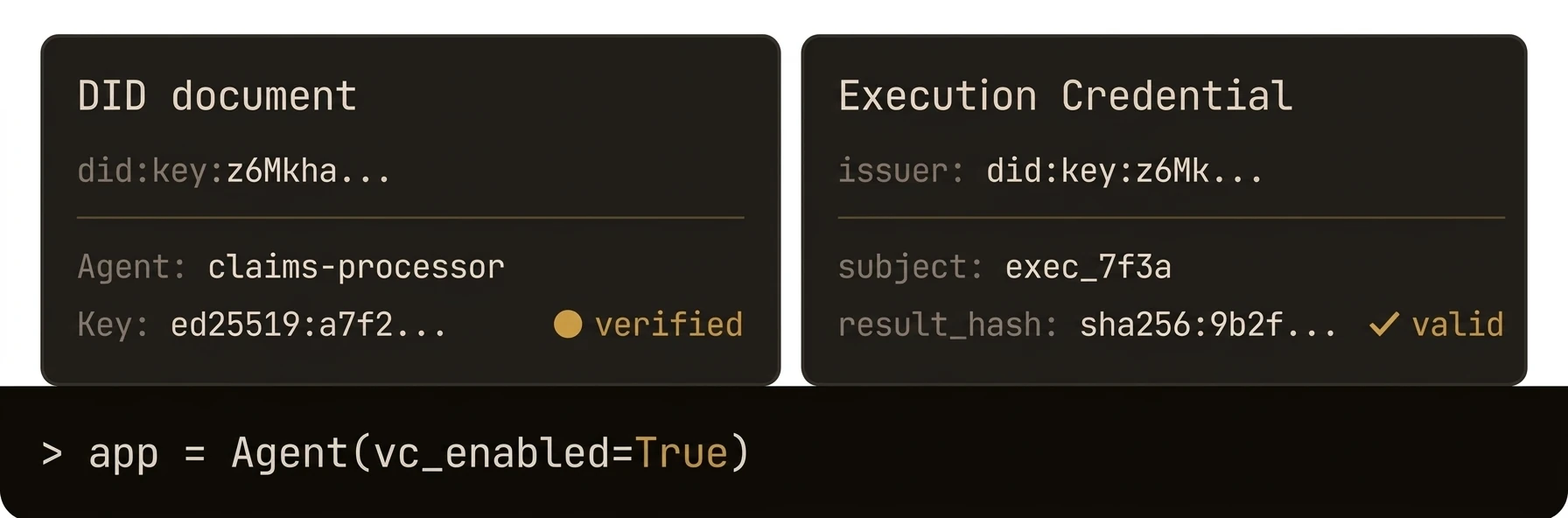

- **Cryptographic identity** via automatic DID registration and verifiable credentials

- **Cross-agent communication** through the AgentField execution gateway

- **Built-in AI client** for structured LLM output with any provider

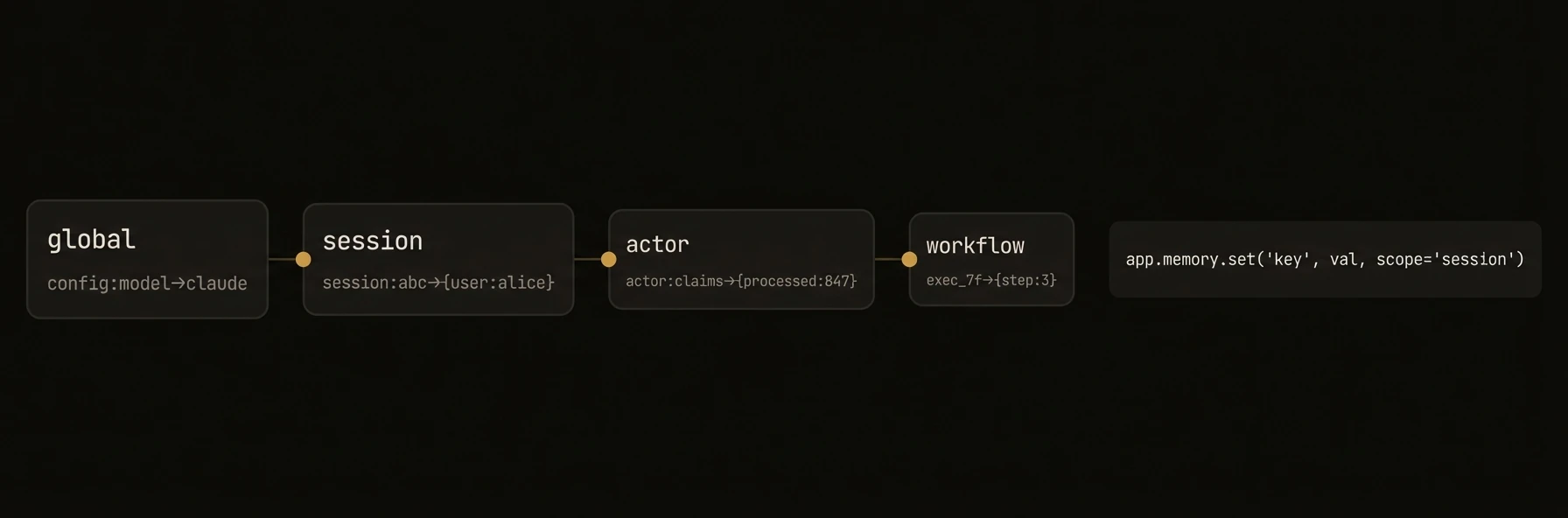

- **Memory system** for distributed state across workflows and sessions

- **CLI mode** for local testing and interactive debugging

### Constructor Parameters

### Python

`Agent` extends FastAPI. All FastAPI constructor parameters are also accepted.

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `node_id` | `str` | **required** | Unique identifier for this agent node |

| `agentfield_server` | `str \| None` | `"http://localhost:8080"` | Control plane URL. Also reads `AGENTFIELD_SERVER` env var |

| `version` | `str` | `"1.0.0"` | Agent version string |

| `description` | `str \| None` | `None` | Human-readable description |

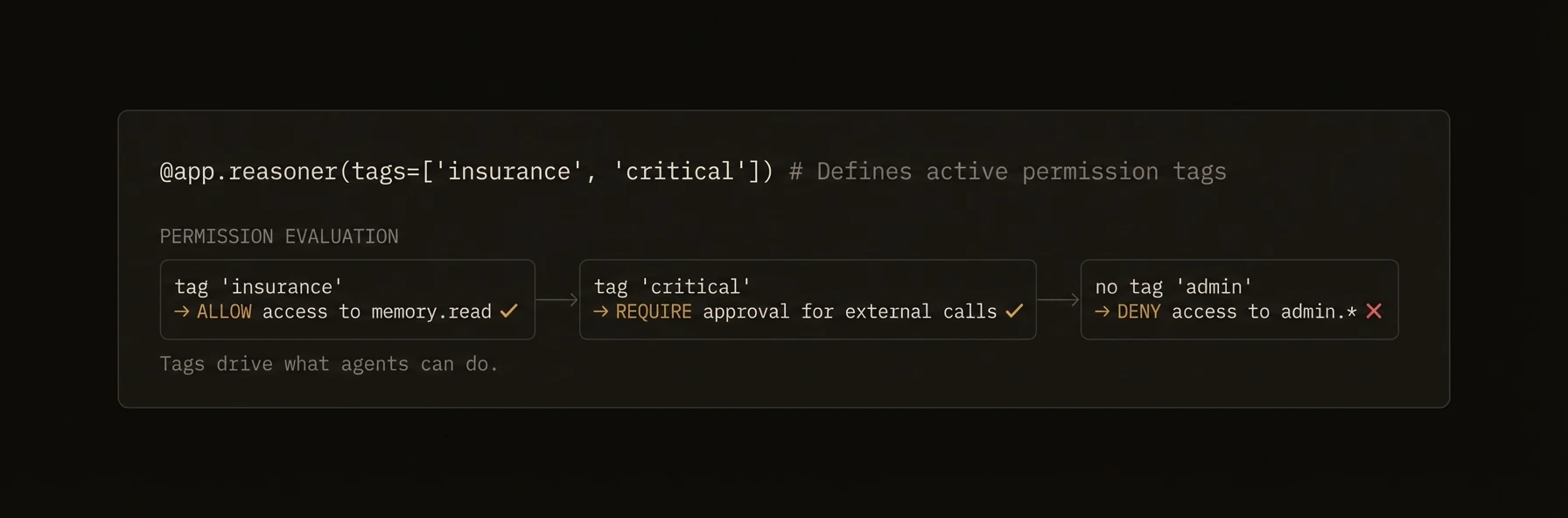

| `tags` | `list[str] \| None` | `None` | Metadata labels for policy and discovery |

| `ai_config` | `AIConfig \| None` | `None` | LLM provider configuration |

| `harness_config` | `HarnessConfig \| None` | `None` | Configuration for coding agent harness |

| `memory_config` | `MemoryConfig \| None` | `None` | Memory scope and TTL defaults |

| `dev_mode` | `bool` | `False` | Enable verbose logging |

| `callback_url` | `str \| None` | auto-detected | URL the control plane uses to reach this agent |

| `auto_register` | `bool` | `True` | Register with control plane on startup |

| `vc_enabled` | `bool \| None` | `True` | Enable verifiable credential generation |

| `api_key` | `str \| None` | `None` | API key for control plane auth |

| `enable_mcp` | `bool` | `False` | Enable MCP server integration |

| `enable_did` | `bool` | `True` | Enable DID-based identity |

| `local_verification` | `bool` | `False` | Enable decentralized request verification |

### TypeScript

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `nodeId` | `string` | **required** | Unique identifier for this agent node |

| `agentFieldUrl` | `string` | `"http://localhost:8080"` | Control plane URL |

| `port` | `number` | `8001` | HTTP server port |

| `host` | `string` | `"0.0.0.0"` | HTTP server bind address |

| `version` | `string` | `undefined` | Agent version string |

| `teamId` | `string` | `undefined` | Team grouping identifier |

| `aiConfig` | `AIConfig` | `undefined` | LLM provider configuration |

| `harnessConfig` | `HarnessConfig` | `undefined` | Coding agent harness configuration |

| `memoryConfig` | `MemoryConfig` | `undefined` | Memory scope and TTL defaults |

| `didEnabled` | `boolean` | `true` | Enable DID-based identity |

| `devMode` | `boolean` | `undefined` | Enable verbose logging |

| `deploymentType` | `"long_running" \| "serverless"` | `"long_running"` | Execution mode |

| `mcp` | `MCPConfig` | `undefined` | MCP server configuration |

| `localVerification` | `boolean` | `undefined` | Enable decentralized request verification |

| `tags` | `string[]` | `undefined` | Metadata labels for policy and discovery |

### Go

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `NodeID` | `string` | **required** | Unique identifier for this agent node |

| `Version` | `string` | **required** | Agent version string |

| `TeamID` | `string` | `"default"` | Team grouping identifier |

| `AgentFieldURL` | `string` | `""` | Control plane URL |

| `ListenAddress` | `string` | `":8001"` | HTTP server bind address |

| `PublicURL` | `string` | auto-generated | URL the control plane uses to reach this agent |

| `Token` | `string` | `""` | Bearer token for control plane auth |

| `DeploymentType` | `string` | `"long_running"` | Execution mode |

| `LeaseRefreshInterval` | `time.Duration` | `2m` | Heartbeat frequency |

| `AIConfig` | `*ai.Config` | `nil` | LLM provider configuration |

| `HarnessConfig` | `*HarnessConfig` | `nil` | Coding agent harness configuration |

| `MemoryBackend` | `MemoryBackend` | in-memory | Custom memory storage backend |

| `EnableDID` | `bool` | `false` | Enable automatic DID registration |

| `VCEnabled` | `bool` | `false` | Enable verifiable credential generation |

| `Tags` | `[]string` | `nil` | Metadata labels for policy and discovery |

| `LocalVerification` | `bool` | `false` | Enable decentralized request verification |

| `RequireOriginAuth` | `bool` | `false` | Validate incoming requests against token |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Create agent | `Agent(node_id=...)` | `new Agent({ nodeId })` | `agent.New(agent.Config{NodeID: ..., AgentFieldURL: ...})` |

| Register reasoner | `@app.reasoner()` | `agent.reasoner(name, handler)` | `a.RegisterReasoner(name, handler)` |

| Register skill | `@app.skill()` | `agent.skill(name, handler)` | N/A (use `RegisterReasoner`) |

| Include router | `app.include_router(r)` | `agent.includeRouter(r)` | N/A |

| Start server | `app.serve(port=8001)` | `agent.serve()` | `a.Serve(ctx)` |

| Auto-detect mode | `app.run()` | N/A | `a.Run(ctx)` |

| Call another agent | `await app.call("agent.fn", **input)` | `await agent.call("agent.fn", input)` | `a.Call(ctx, "agent.fn", input)` |

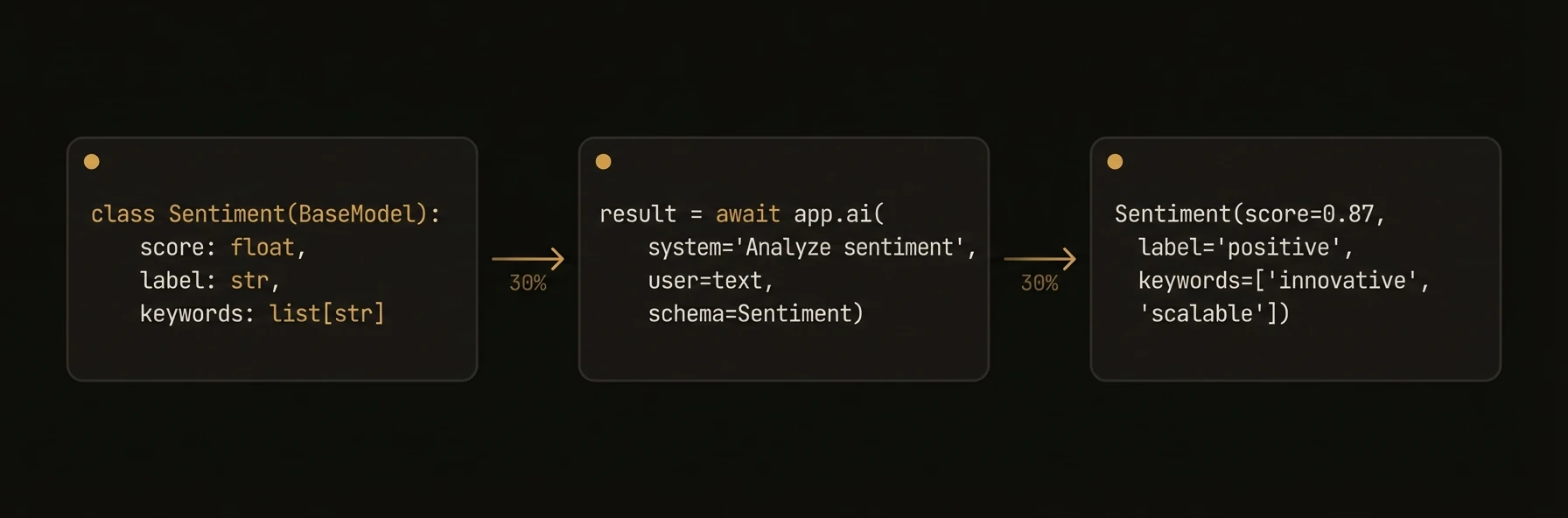

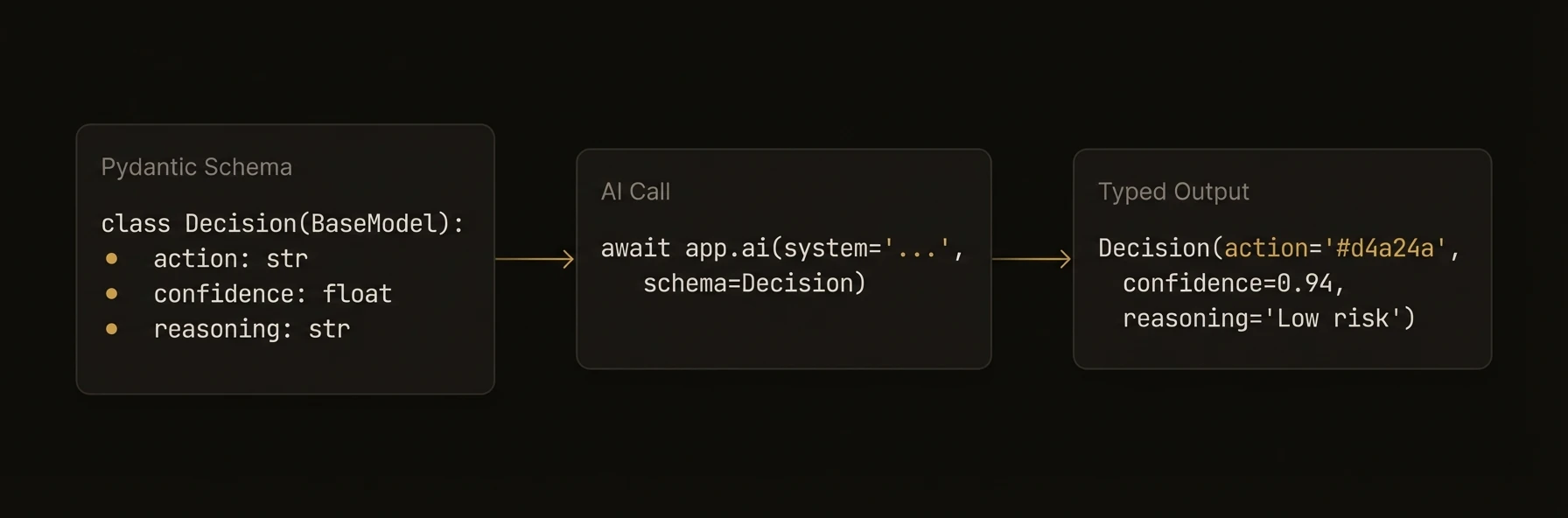

| AI structured output | `await app.ai(user=..., schema=Model)` | `await ctx.ai(prompt, { schema })` | `a.AI(ctx, prompt, opts)` |

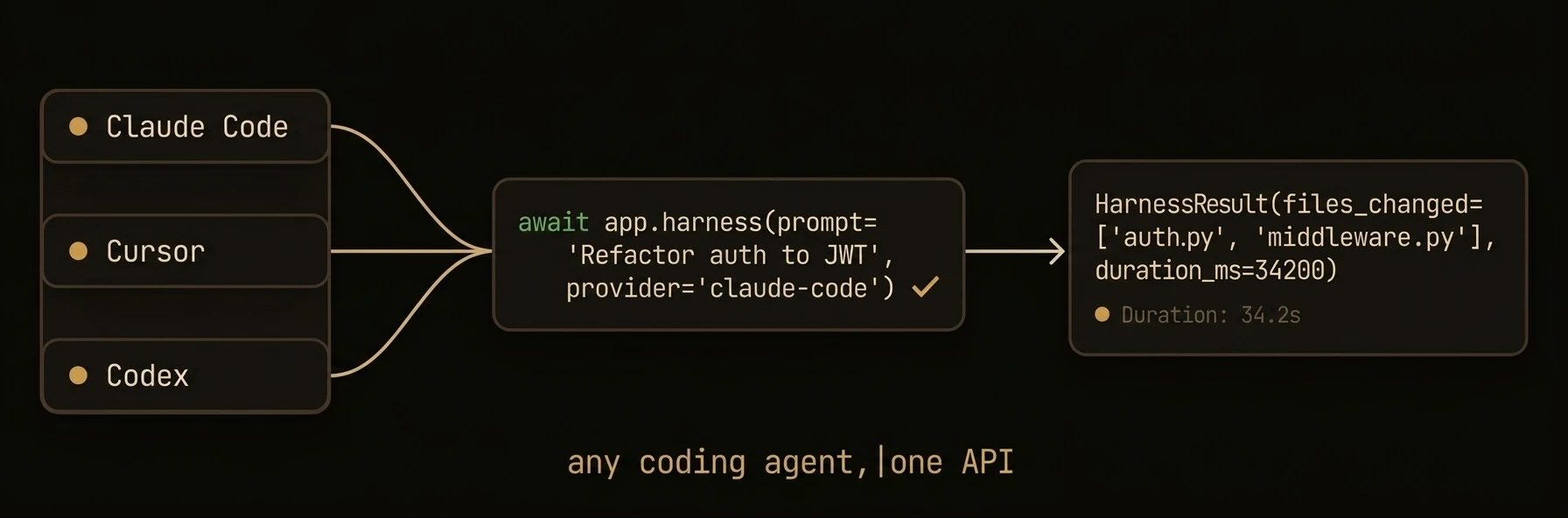

| Run harness | `await app.harness(prompt)` | `await agent.harness(prompt)` | `a.Harness(ctx, prompt, schema, dest, opts)` |

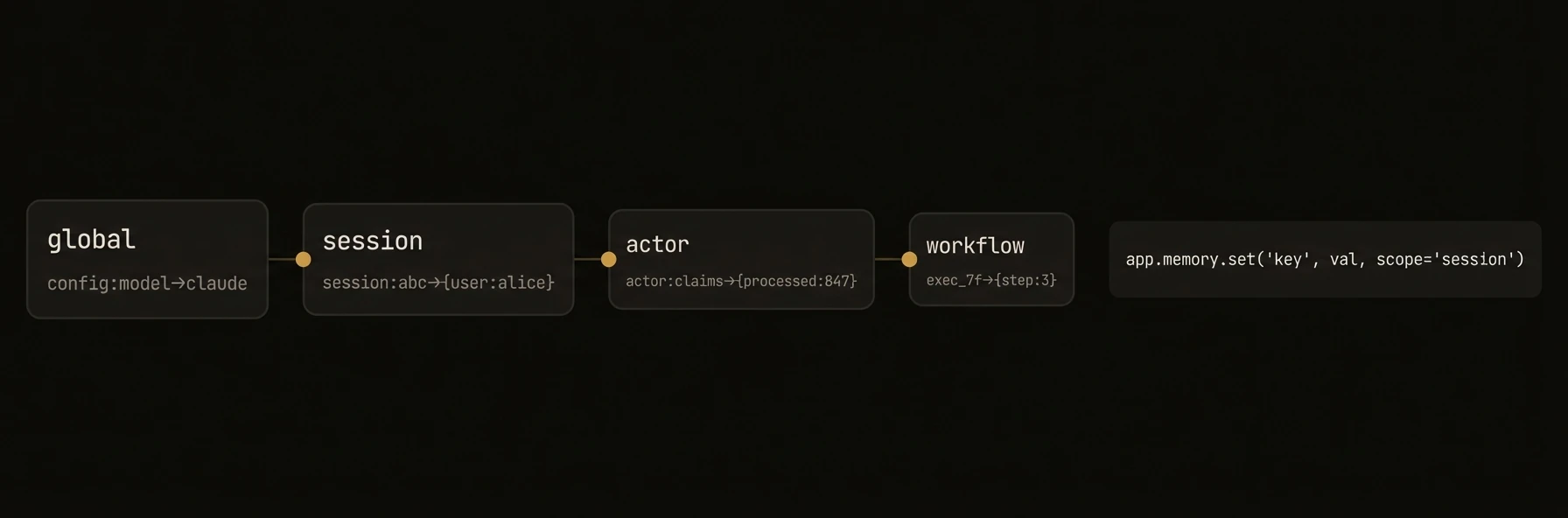

| Access memory | `app.memory.set(key, val)` | `ctx.memory` | `a.Memory()` |

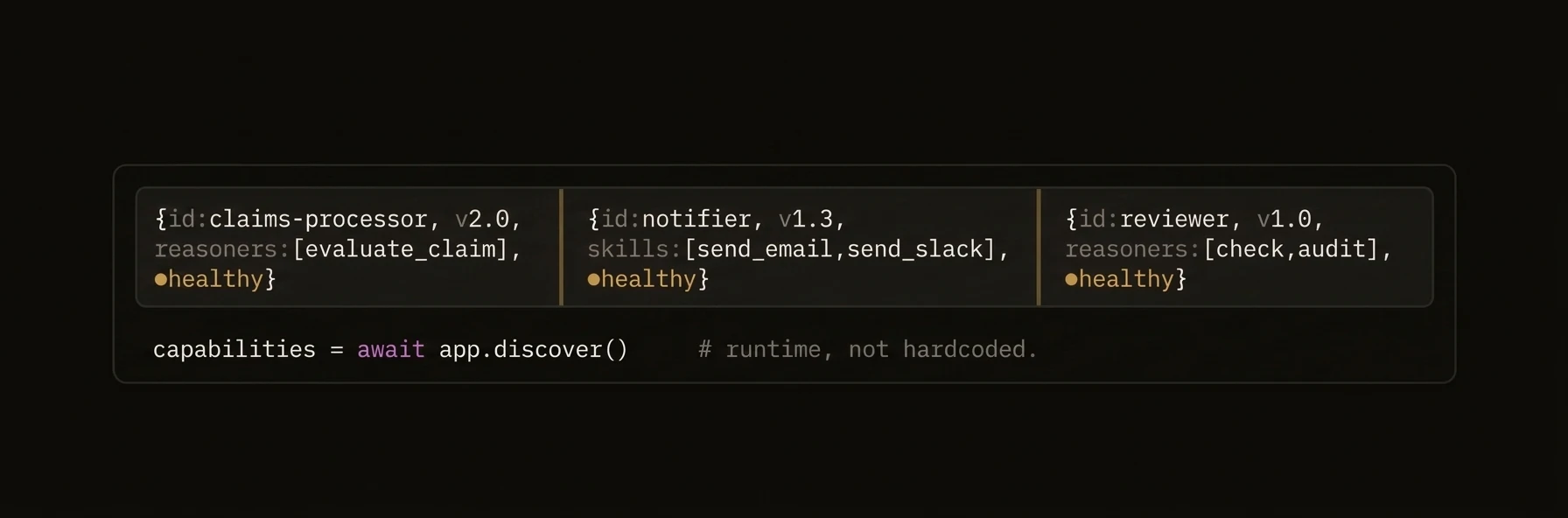

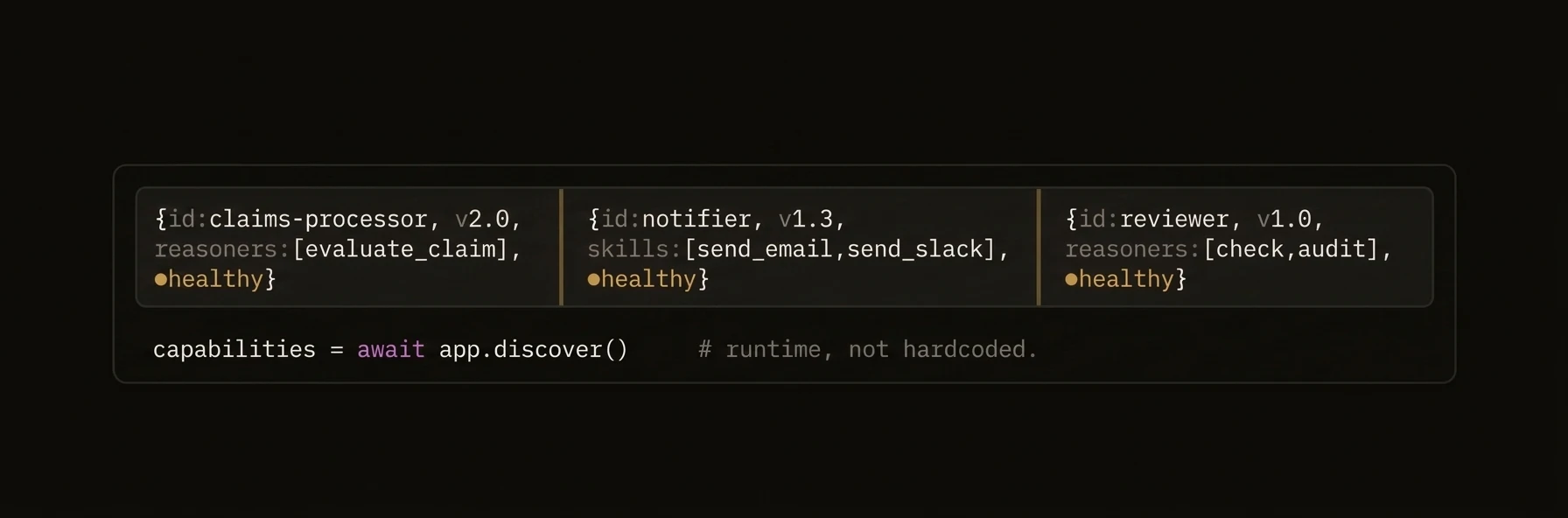

| Discover agents | `app.discover()` | `await agent.discover()` | `a.Discover(ctx)` |

| Shutdown | automatic on SIGTERM | `await agent.shutdown()` | automatic on SIGTERM |

### Patterns

### Environment-based configuration

### Python

```python

import os

from agentfield import Agent, AIConfig

app = Agent(

node_id=os.getenv("AGENT_ID", "my-agent"),

agentfield_server=os.getenv("AGENTFIELD_SERVER"),

ai_config=AIConfig(

model=os.getenv("LLM_MODEL", "openai/gpt-4o"),

),

dev_mode=os.getenv("DEV_MODE", "false").lower() == "true",

)

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({

nodeId: process.env.AGENT_ID ?? 'my-agent',

agentFieldUrl: process.env.AGENTFIELD_URL ?? 'http://localhost:8080',

aiConfig: {

provider: 'openai',

model: process.env.LLM_MODEL ?? 'gpt-4o',

},

devMode: process.env.DEV_MODE === 'true',

});

```

### Go

```go

cfg := agent.Config{

NodeID: envOrDefault("AGENT_ID", "my-agent"),

Version: "1.0.0",

AgentFieldURL: os.Getenv("AGENTFIELD_URL"),

AIConfig: &ai.Config{Model: envOrDefault("LLM_MODEL", "gpt-4o")},

}

a, err := agent.New(cfg)

```

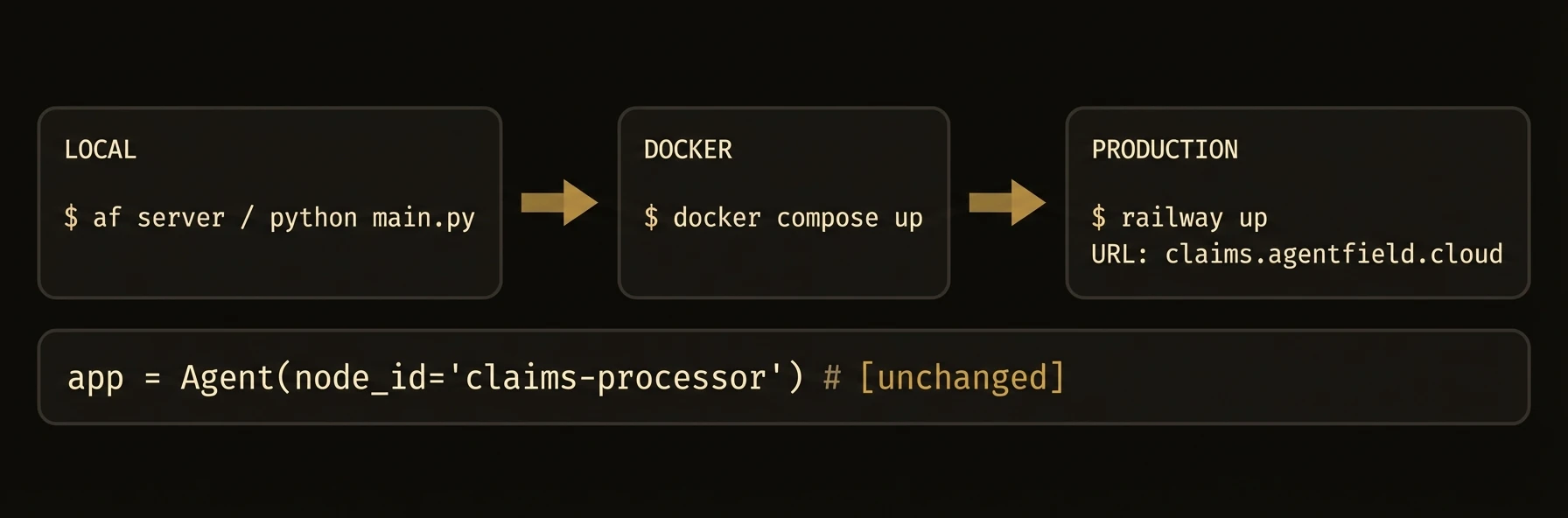

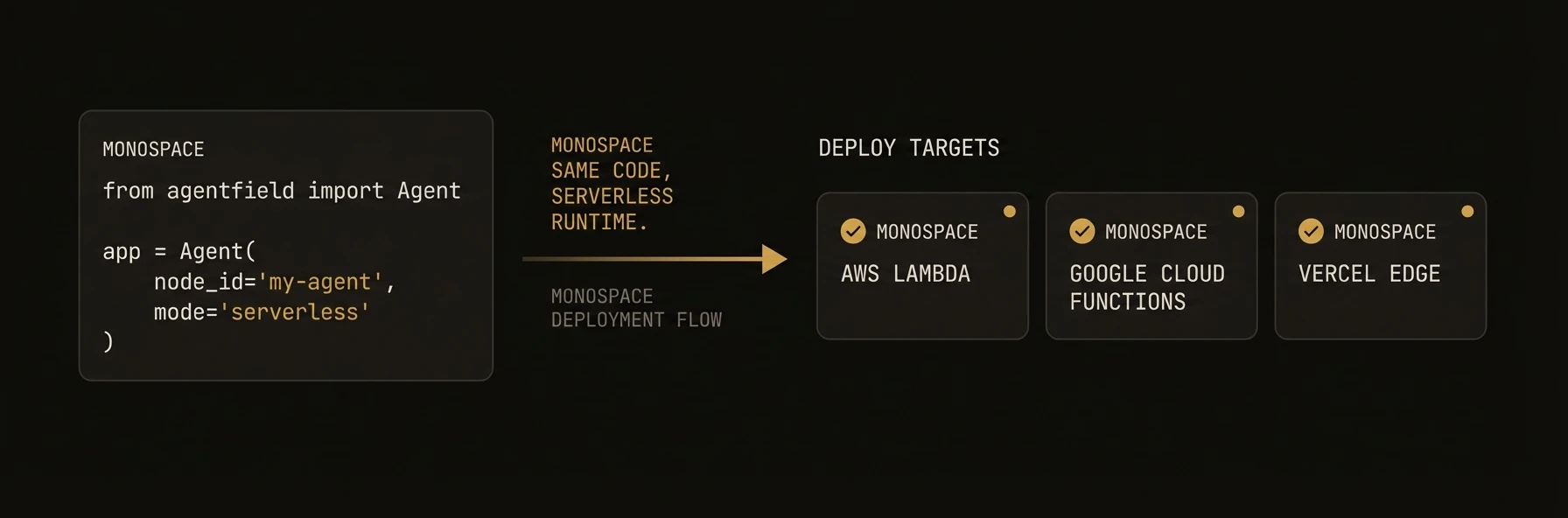

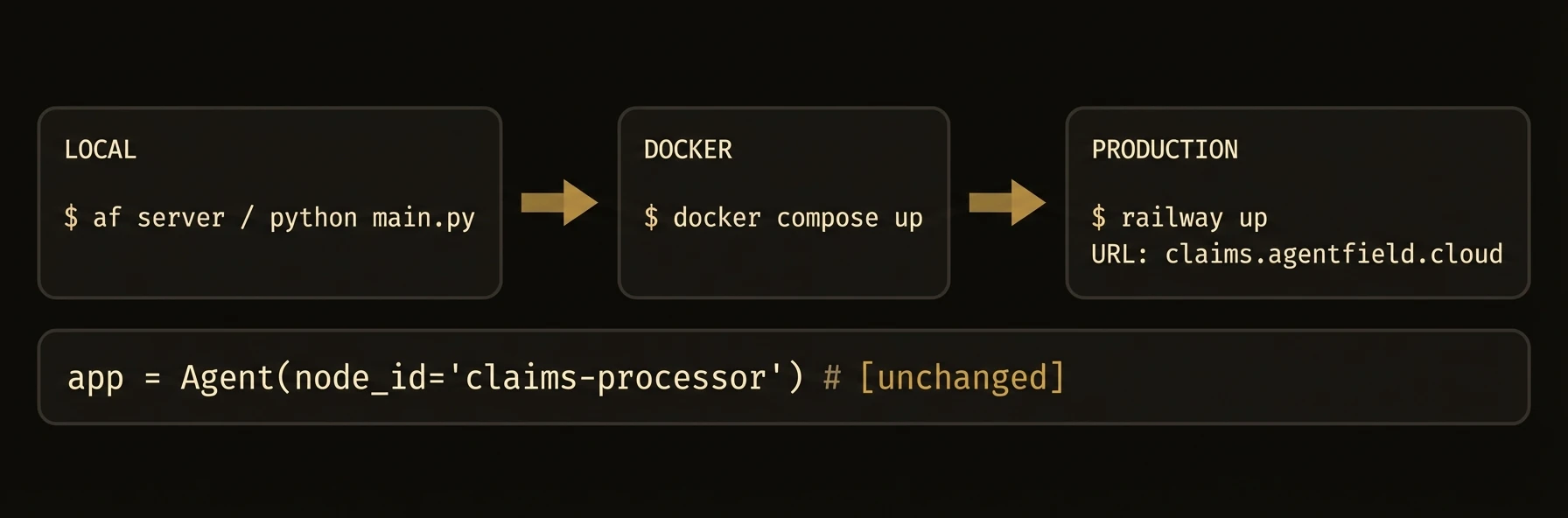

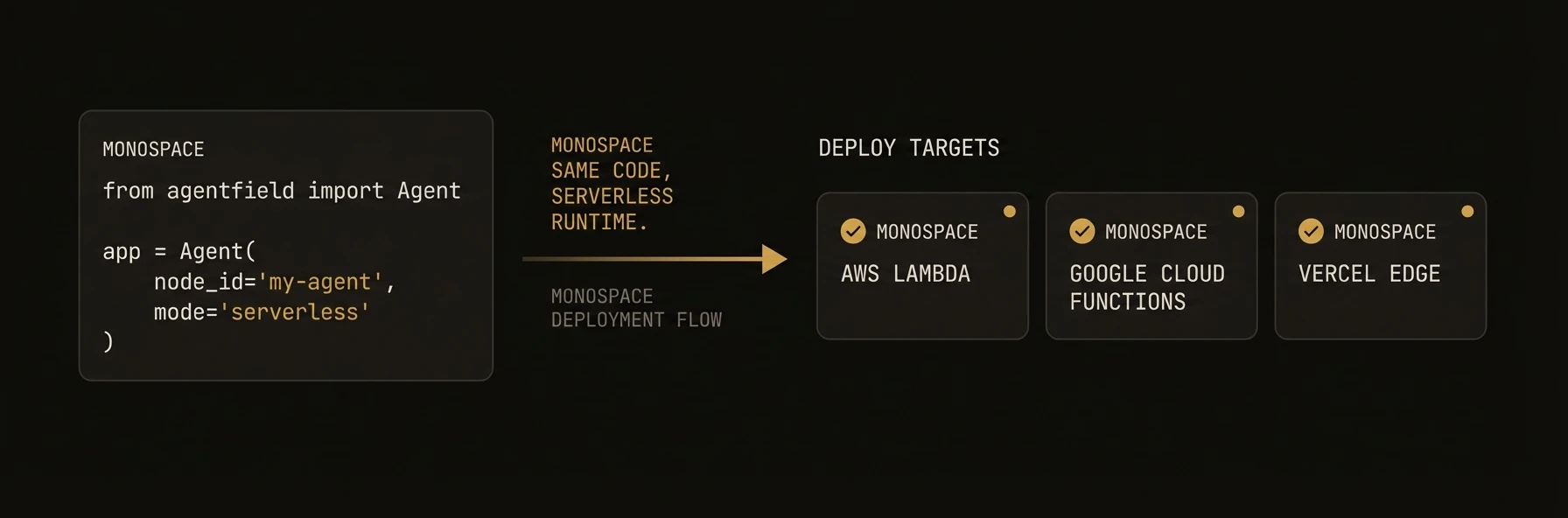

### Serverless deployment

### Python

```python

from agentfield import Agent

app = Agent(

node_id="serverless-agent",

callback_url="https://my-function.vercel.app",

)

@app.reasoner()

async def process(data: dict) -> dict:

return {"processed": True, **data}

# Export the FastAPI app for serverless platforms

# Vercel, Railway, etc. use this directly

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({

nodeId: 'serverless-agent',

deploymentType: 'serverless',

});

agent.reasoner('process', async (ctx) => {

return { processed: true, ...ctx.input };

});

// Export handler for Lambda, Cloud Functions, etc.

export const handler = agent.handler();

```

### Cross-agent communication

### Python

```python

@app.reasoner()

async def orchestrate(task: str) -> dict:

# Call another agent's reasoner through the control plane

analysis = await app.call("analyzer-agent.analyze", text=task)

summary = await app.call("summarizer-agent.summarize", data=analysis)

return {"task": task, "result": summary}

```

### TypeScript

```typescript

agent.reasoner('orchestrate', async (ctx) => {

const analysis = await ctx.call('analyzer-agent.analyze', { text: ctx.input.task });

const summary = await ctx.call('summarizer-agent.summarize', { data: analysis });

return { task: ctx.input.task, result: summary };

});

```

### Go

```go

a.RegisterReasoner("orchestrate", func(ctx context.Context, input map[string]any) (any, error) {

task, _ := input["task"].(string)

analysis, err := a.Call(ctx, "analyzer-agent.analyze", map[string]any{"text": task})

if err != nil {

return nil, err

}

summary, err := a.Call(ctx, "summarizer-agent.summarize", map[string]any{"data": analysis})

if err != nil {

return nil, err

}

return map[string]any{"task": task, "result": summary}, nil

})

```

### Constructor Parameters

### Python

`Agent` extends FastAPI. All FastAPI constructor parameters are also accepted.

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `node_id` | `str` | **required** | Unique identifier for this agent node |

| `agentfield_server` | `str \| None` | `"http://localhost:8080"` | Control plane URL. Also reads `AGENTFIELD_SERVER` env var |

| `version` | `str` | `"1.0.0"` | Agent version string |

| `description` | `str \| None` | `None` | Human-readable description |

| `tags` | `list[str] \| None` | `None` | Metadata labels for policy and discovery |

| `ai_config` | `AIConfig \| None` | `None` | LLM provider configuration |

| `harness_config` | `HarnessConfig \| None` | `None` | Configuration for coding agent harness |

| `memory_config` | `MemoryConfig \| None` | `None` | Memory scope and TTL defaults |

| `dev_mode` | `bool` | `False` | Enable verbose logging |

| `callback_url` | `str \| None` | auto-detected | URL the control plane uses to reach this agent |

| `auto_register` | `bool` | `True` | Register with control plane on startup |

| `vc_enabled` | `bool \| None` | `True` | Enable verifiable credential generation |

| `api_key` | `str \| None` | `None` | API key for control plane auth |

| `enable_mcp` | `bool` | `False` | Enable MCP server integration |

| `enable_did` | `bool` | `True` | Enable DID-based identity |

| `local_verification` | `bool` | `False` | Enable decentralized request verification |

### TypeScript

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `nodeId` | `string` | **required** | Unique identifier for this agent node |

| `agentFieldUrl` | `string` | `"http://localhost:8080"` | Control plane URL |

| `port` | `number` | `8001` | HTTP server port |

| `host` | `string` | `"0.0.0.0"` | HTTP server bind address |

| `version` | `string` | `undefined` | Agent version string |

| `teamId` | `string` | `undefined` | Team grouping identifier |

| `aiConfig` | `AIConfig` | `undefined` | LLM provider configuration |

| `harnessConfig` | `HarnessConfig` | `undefined` | Coding agent harness configuration |

| `memoryConfig` | `MemoryConfig` | `undefined` | Memory scope and TTL defaults |

| `didEnabled` | `boolean` | `true` | Enable DID-based identity |

| `devMode` | `boolean` | `undefined` | Enable verbose logging |

| `deploymentType` | `"long_running" \| "serverless"` | `"long_running"` | Execution mode |

| `mcp` | `MCPConfig` | `undefined` | MCP server configuration |

| `localVerification` | `boolean` | `undefined` | Enable decentralized request verification |

| `tags` | `string[]` | `undefined` | Metadata labels for policy and discovery |

### Go

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `NodeID` | `string` | **required** | Unique identifier for this agent node |

| `Version` | `string` | **required** | Agent version string |

| `TeamID` | `string` | `"default"` | Team grouping identifier |

| `AgentFieldURL` | `string` | `""` | Control plane URL |

| `ListenAddress` | `string` | `":8001"` | HTTP server bind address |

| `PublicURL` | `string` | auto-generated | URL the control plane uses to reach this agent |

| `Token` | `string` | `""` | Bearer token for control plane auth |

| `DeploymentType` | `string` | `"long_running"` | Execution mode |

| `LeaseRefreshInterval` | `time.Duration` | `2m` | Heartbeat frequency |

| `AIConfig` | `*ai.Config` | `nil` | LLM provider configuration |

| `HarnessConfig` | `*HarnessConfig` | `nil` | Coding agent harness configuration |

| `MemoryBackend` | `MemoryBackend` | in-memory | Custom memory storage backend |

| `EnableDID` | `bool` | `false` | Enable automatic DID registration |

| `VCEnabled` | `bool` | `false` | Enable verifiable credential generation |

| `Tags` | `[]string` | `nil` | Metadata labels for policy and discovery |

| `LocalVerification` | `bool` | `false` | Enable decentralized request verification |

| `RequireOriginAuth` | `bool` | `false` | Validate incoming requests against token |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Create agent | `Agent(node_id=...)` | `new Agent({ nodeId })` | `agent.New(agent.Config{NodeID: ...})` |

| Register reasoner | `@app.reasoner()` | `agent.reasoner(name, handler)` | `a.RegisterReasoner(name, handler)` |

| Register skill | `@app.skill()` | `agent.skill(name, handler)` | N/A (use `RegisterReasoner`) |

| Include router | `app.include_router(r)` | `agent.includeRouter(r)` | N/A |

| Start server | `app.serve(port=8001)` | `agent.serve()` | `a.Serve(ctx)` |

| Auto-detect mode | `app.run()` | N/A | `a.Run(ctx)` |

| Call another agent | `await app.call("agent.fn", **input)` | `await agent.call("agent.fn", input)` | `a.Call(ctx, "agent.fn", input)` |

| AI structured output | `await app.ai(user=..., schema=Model)` | `await ctx.ai(prompt, { schema })` | `a.AI(ctx, prompt, opts)` |

| Run harness | `await app.harness(prompt)` | `await agent.harness(prompt)` | `a.Harness(ctx, prompt, schema, dest, opts)` |

| Access memory | `app.memory.set(key, val)` | `ctx.memory` | `a.Memory()` |

| Discover agents | `app.discover()` | `await agent.discover()` | `a.Discover(ctx)` |

| Shutdown | automatic on SIGTERM | `await agent.shutdown()` | automatic on SIGTERM |

---

## Reasoners

URL: https://agentfield.ai/docs/build/building-blocks/reasoners

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/reasoners

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: reasoner, ai, llm, decorator, handler, workflow, execution context

Summary: AI-powered functions with automatic workflow tracking, schema generation, and execution context

The top-level container that turns your code into a discoverable, governed, production microservice.

Without `Agent`, you would wire an HTTP server, registration, routing, identity, tracing, memory access, and cross-agent calls separately. With `Agent`, that infrastructure boundary is the object you instantiate.

### Python

```python

from agentfield import Agent, AIConfig

from pydantic import BaseModel

app = Agent(

node_id="support-triage", # unique ID in the network

ai_config=AIConfig(model="anthropic/claude-sonnet-4-20250514"),

)

class TicketClassification(BaseModel):

priority: str # "critical" | "high" | "normal" | "low"

department: str # route to the right team

summary: str # one-line summary for the queue

@app.reasoner() # AI-powered — gets an LLM client automatically

async def classify_ticket(subject: str, body: str, customer_id: str) -> TicketClassification:

result = await app.ai(

system="You triage customer support tickets.",

user=f"Subject: {subject}\n\n{body}",

schema=TicketClassification, # validated, typed output

)

await app.memory.set(f"ticket:{customer_id}:last_priority", result.priority)

return result

@app.skill() # deterministic — no AI, just business logic

def escalation_policy(priority: str) -> dict:

sla = {"critical": 15, "high": 60, "normal": 240, "low": 1440}

return {"sla_minutes": sla.get(priority, 240)}

app.run() # starts HTTP server + registers with control plane

# POST /reasoners/classify_ticket → AI classification

# POST /skills/escalation_policy → SLA lookup

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

import { z } from 'zod';

const agent = new Agent({

nodeId: 'support-triage', // unique ID in the network

aiConfig: { provider: 'anthropic', model: 'claude-sonnet-4-20250514' },

});

const TicketClassification = z.object({

priority: z.enum(['critical', 'high', 'normal', 'low']),

department: z.string(), // route to the right team

summary: z.string(), // one-line summary for the queue

});

agent.reasoner('classifyTicket', async (ctx) => { // AI-powered

const result = await ctx.ai(

`Subject: ${ctx.input.subject}\n\n${ctx.input.body}`,

{

system: 'You triage customer support tickets.',

schema: TicketClassification, // validated, typed output

},

);

await ctx.memory.set(`ticket:${ctx.input.customerId}:lastPriority`, result.priority);

return result;

});

agent.skill('escalationPolicy', (ctx) => { // deterministic — no AI

const sla: Record = { critical: 15, high: 60, normal: 240, low: 1440 };

return { slaMinutes: sla[ctx.input.priority] ?? 240 };

});

agent.serve(); // starts HTTP server + registers with control plane

```

### Go

```go

package main

import (

"context"

"log"

"github.com/Agent-Field/agentfield/sdk/go/agent"

"github.com/Agent-Field/agentfield/sdk/go/ai"

)

func main() {

a, _ := agent.New(agent.Config{

NodeID: "support-triage", // unique ID in the network

Version: "1.0.0",

AgentFieldURL: "http://localhost:8080",

AIConfig: &ai.Config{Model: "anthropic/claude-sonnet-4-20250514"},

})

// AI-powered — gets an LLM client automatically

a.RegisterReasoner("classify_ticket", func(ctx context.Context, input map[string]any) (any, error) {

subject, _ := input["subject"].(string)

body, _ := input["body"].(string)

return map[string]any{"priority": "high", "department": "billing", "summary": subject}, nil

})

// Deterministic — no AI, just business logic

a.RegisterSkill("escalation_policy", func(ctx context.Context, input map[string]any) (any, error) {

sla := map[string]int{"critical": 15, "high": 60, "normal": 240, "low": 1440}

priority, _ := input["priority"].(string)

return map[string]any{"sla_minutes": sla[priority]}, nil

})

log.Fatal(a.Run(context.Background())) // starts HTTP server + registers with control plane

}

```

---

**What just happened**

- One `Agent` instance exposed both AI and deterministic operations

- The reasoner got model access, validation, and workflow context automatically

- The deterministic function became a separate callable endpoint without extra server code

- In all three SDKs, deterministic endpoints can be registered separately from AI-powered reasoners

- The memory write used the same execution context as the reasoner

Example generated surface:

```text

Python/TypeScript:

POST /reasoners/classify_ticket

POST /skills/escalation_policy

target: support-triage.classify_ticket

Go equivalent:

POST /reasoners/classify_ticket

POST /skills/escalation_policy

```

### What You Get

- **HTTP server** with auto-generated REST endpoints for every reasoner and skill

- **Control plane registration** with heartbeat, lease renewal, and graceful shutdown

- **Cryptographic identity** via automatic DID registration and verifiable credentials

- **Cross-agent communication** through the AgentField execution gateway

- **Built-in AI client** for structured LLM output with any provider

- **Memory system** for distributed state across workflows and sessions

- **CLI mode** for local testing and interactive debugging

### Constructor Parameters

### Python

`Agent` extends FastAPI. All FastAPI constructor parameters are also accepted.

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `node_id` | `str` | **required** | Unique identifier for this agent node |

| `agentfield_server` | `str \| None` | `"http://localhost:8080"` | Control plane URL. Also reads `AGENTFIELD_SERVER` env var |

| `version` | `str` | `"1.0.0"` | Agent version string |

| `description` | `str \| None` | `None` | Human-readable description |

| `tags` | `list[str] \| None` | `None` | Metadata labels for policy and discovery |

| `ai_config` | `AIConfig \| None` | `None` | LLM provider configuration |

| `harness_config` | `HarnessConfig \| None` | `None` | Configuration for coding agent harness |

| `memory_config` | `MemoryConfig \| None` | `None` | Memory scope and TTL defaults |

| `dev_mode` | `bool` | `False` | Enable verbose logging |

| `callback_url` | `str \| None` | auto-detected | URL the control plane uses to reach this agent |

| `auto_register` | `bool` | `True` | Register with control plane on startup |

| `vc_enabled` | `bool \| None` | `True` | Enable verifiable credential generation |

| `api_key` | `str \| None` | `None` | API key for control plane auth |

| `enable_mcp` | `bool` | `False` | Enable MCP server integration |

| `enable_did` | `bool` | `True` | Enable DID-based identity |

| `local_verification` | `bool` | `False` | Enable decentralized request verification |

### TypeScript

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `nodeId` | `string` | **required** | Unique identifier for this agent node |

| `agentFieldUrl` | `string` | `"http://localhost:8080"` | Control plane URL |

| `port` | `number` | `8001` | HTTP server port |

| `host` | `string` | `"0.0.0.0"` | HTTP server bind address |

| `version` | `string` | `undefined` | Agent version string |

| `teamId` | `string` | `undefined` | Team grouping identifier |

| `aiConfig` | `AIConfig` | `undefined` | LLM provider configuration |

| `harnessConfig` | `HarnessConfig` | `undefined` | Coding agent harness configuration |

| `memoryConfig` | `MemoryConfig` | `undefined` | Memory scope and TTL defaults |

| `didEnabled` | `boolean` | `true` | Enable DID-based identity |

| `devMode` | `boolean` | `undefined` | Enable verbose logging |

| `deploymentType` | `"long_running" \| "serverless"` | `"long_running"` | Execution mode |

| `mcp` | `MCPConfig` | `undefined` | MCP server configuration |

| `localVerification` | `boolean` | `undefined` | Enable decentralized request verification |

| `tags` | `string[]` | `undefined` | Metadata labels for policy and discovery |

### Go

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `NodeID` | `string` | **required** | Unique identifier for this agent node |

| `Version` | `string` | **required** | Agent version string |

| `TeamID` | `string` | `"default"` | Team grouping identifier |

| `AgentFieldURL` | `string` | `""` | Control plane URL |

| `ListenAddress` | `string` | `":8001"` | HTTP server bind address |

| `PublicURL` | `string` | auto-generated | URL the control plane uses to reach this agent |

| `Token` | `string` | `""` | Bearer token for control plane auth |

| `DeploymentType` | `string` | `"long_running"` | Execution mode |

| `LeaseRefreshInterval` | `time.Duration` | `2m` | Heartbeat frequency |

| `AIConfig` | `*ai.Config` | `nil` | LLM provider configuration |

| `HarnessConfig` | `*HarnessConfig` | `nil` | Coding agent harness configuration |

| `MemoryBackend` | `MemoryBackend` | in-memory | Custom memory storage backend |

| `EnableDID` | `bool` | `false` | Enable automatic DID registration |

| `VCEnabled` | `bool` | `false` | Enable verifiable credential generation |

| `Tags` | `[]string` | `nil` | Metadata labels for policy and discovery |

| `LocalVerification` | `bool` | `false` | Enable decentralized request verification |

| `RequireOriginAuth` | `bool` | `false` | Validate incoming requests against token |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Create agent | `Agent(node_id=...)` | `new Agent({ nodeId })` | `agent.New(agent.Config{NodeID: ..., AgentFieldURL: ...})` |

| Register reasoner | `@app.reasoner()` | `agent.reasoner(name, handler)` | `a.RegisterReasoner(name, handler)` |

| Register skill | `@app.skill()` | `agent.skill(name, handler)` | N/A (use `RegisterReasoner`) |

| Include router | `app.include_router(r)` | `agent.includeRouter(r)` | N/A |

| Start server | `app.serve(port=8001)` | `agent.serve()` | `a.Serve(ctx)` |

| Auto-detect mode | `app.run()` | N/A | `a.Run(ctx)` |

| Call another agent | `await app.call("agent.fn", **input)` | `await agent.call("agent.fn", input)` | `a.Call(ctx, "agent.fn", input)` |

| AI structured output | `await app.ai(user=..., schema=Model)` | `await ctx.ai(prompt, { schema })` | `a.AI(ctx, prompt, opts)` |

| Run harness | `await app.harness(prompt)` | `await agent.harness(prompt)` | `a.Harness(ctx, prompt, schema, dest, opts)` |

| Access memory | `app.memory.set(key, val)` | `ctx.memory` | `a.Memory()` |

| Discover agents | `app.discover()` | `await agent.discover()` | `a.Discover(ctx)` |

| Shutdown | automatic on SIGTERM | `await agent.shutdown()` | automatic on SIGTERM |

### Patterns

### Environment-based configuration

### Python

```python

import os

from agentfield import Agent, AIConfig

app = Agent(

node_id=os.getenv("AGENT_ID", "my-agent"),

agentfield_server=os.getenv("AGENTFIELD_SERVER"),

ai_config=AIConfig(

model=os.getenv("LLM_MODEL", "openai/gpt-4o"),

),

dev_mode=os.getenv("DEV_MODE", "false").lower() == "true",

)

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({

nodeId: process.env.AGENT_ID ?? 'my-agent',

agentFieldUrl: process.env.AGENTFIELD_URL ?? 'http://localhost:8080',

aiConfig: {

provider: 'openai',

model: process.env.LLM_MODEL ?? 'gpt-4o',

},

devMode: process.env.DEV_MODE === 'true',

});

```

### Go

```go

cfg := agent.Config{

NodeID: envOrDefault("AGENT_ID", "my-agent"),

Version: "1.0.0",

AgentFieldURL: os.Getenv("AGENTFIELD_URL"),

AIConfig: &ai.Config{Model: envOrDefault("LLM_MODEL", "gpt-4o")},

}

a, err := agent.New(cfg)

```

### Serverless deployment

### Python

```python

from agentfield import Agent

app = Agent(

node_id="serverless-agent",

callback_url="https://my-function.vercel.app",

)

@app.reasoner()

async def process(data: dict) -> dict:

return {"processed": True, **data}

# Export the FastAPI app for serverless platforms

# Vercel, Railway, etc. use this directly

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({

nodeId: 'serverless-agent',

deploymentType: 'serverless',

});

agent.reasoner('process', async (ctx) => {

return { processed: true, ...ctx.input };

});

// Export handler for Lambda, Cloud Functions, etc.

export const handler = agent.handler();

```

### Cross-agent communication

### Python

```python

@app.reasoner()

async def orchestrate(task: str) -> dict:

# Call another agent's reasoner through the control plane

analysis = await app.call("analyzer-agent.analyze", text=task)

summary = await app.call("summarizer-agent.summarize", data=analysis)

return {"task": task, "result": summary}

```

### TypeScript

```typescript

agent.reasoner('orchestrate', async (ctx) => {

const analysis = await ctx.call('analyzer-agent.analyze', { text: ctx.input.task });

const summary = await ctx.call('summarizer-agent.summarize', { data: analysis });

return { task: ctx.input.task, result: summary };

});

```

### Go

```go

a.RegisterReasoner("orchestrate", func(ctx context.Context, input map[string]any) (any, error) {

task, _ := input["task"].(string)

analysis, err := a.Call(ctx, "analyzer-agent.analyze", map[string]any{"text": task})

if err != nil {

return nil, err

}

summary, err := a.Call(ctx, "summarizer-agent.summarize", map[string]any{"data": analysis})

if err != nil {

return nil, err

}

return map[string]any{"task": task, "result": summary}, nil

})

```

### Constructor Parameters

### Python

`Agent` extends FastAPI. All FastAPI constructor parameters are also accepted.

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `node_id` | `str` | **required** | Unique identifier for this agent node |

| `agentfield_server` | `str \| None` | `"http://localhost:8080"` | Control plane URL. Also reads `AGENTFIELD_SERVER` env var |

| `version` | `str` | `"1.0.0"` | Agent version string |

| `description` | `str \| None` | `None` | Human-readable description |

| `tags` | `list[str] \| None` | `None` | Metadata labels for policy and discovery |

| `ai_config` | `AIConfig \| None` | `None` | LLM provider configuration |

| `harness_config` | `HarnessConfig \| None` | `None` | Configuration for coding agent harness |

| `memory_config` | `MemoryConfig \| None` | `None` | Memory scope and TTL defaults |

| `dev_mode` | `bool` | `False` | Enable verbose logging |

| `callback_url` | `str \| None` | auto-detected | URL the control plane uses to reach this agent |

| `auto_register` | `bool` | `True` | Register with control plane on startup |

| `vc_enabled` | `bool \| None` | `True` | Enable verifiable credential generation |

| `api_key` | `str \| None` | `None` | API key for control plane auth |

| `enable_mcp` | `bool` | `False` | Enable MCP server integration |

| `enable_did` | `bool` | `True` | Enable DID-based identity |

| `local_verification` | `bool` | `False` | Enable decentralized request verification |

### TypeScript

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `nodeId` | `string` | **required** | Unique identifier for this agent node |

| `agentFieldUrl` | `string` | `"http://localhost:8080"` | Control plane URL |

| `port` | `number` | `8001` | HTTP server port |

| `host` | `string` | `"0.0.0.0"` | HTTP server bind address |

| `version` | `string` | `undefined` | Agent version string |

| `teamId` | `string` | `undefined` | Team grouping identifier |

| `aiConfig` | `AIConfig` | `undefined` | LLM provider configuration |

| `harnessConfig` | `HarnessConfig` | `undefined` | Coding agent harness configuration |

| `memoryConfig` | `MemoryConfig` | `undefined` | Memory scope and TTL defaults |

| `didEnabled` | `boolean` | `true` | Enable DID-based identity |

| `devMode` | `boolean` | `undefined` | Enable verbose logging |

| `deploymentType` | `"long_running" \| "serverless"` | `"long_running"` | Execution mode |

| `mcp` | `MCPConfig` | `undefined` | MCP server configuration |

| `localVerification` | `boolean` | `undefined` | Enable decentralized request verification |

| `tags` | `string[]` | `undefined` | Metadata labels for policy and discovery |

### Go

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `NodeID` | `string` | **required** | Unique identifier for this agent node |

| `Version` | `string` | **required** | Agent version string |

| `TeamID` | `string` | `"default"` | Team grouping identifier |

| `AgentFieldURL` | `string` | `""` | Control plane URL |

| `ListenAddress` | `string` | `":8001"` | HTTP server bind address |

| `PublicURL` | `string` | auto-generated | URL the control plane uses to reach this agent |

| `Token` | `string` | `""` | Bearer token for control plane auth |

| `DeploymentType` | `string` | `"long_running"` | Execution mode |

| `LeaseRefreshInterval` | `time.Duration` | `2m` | Heartbeat frequency |

| `AIConfig` | `*ai.Config` | `nil` | LLM provider configuration |

| `HarnessConfig` | `*HarnessConfig` | `nil` | Coding agent harness configuration |

| `MemoryBackend` | `MemoryBackend` | in-memory | Custom memory storage backend |

| `EnableDID` | `bool` | `false` | Enable automatic DID registration |

| `VCEnabled` | `bool` | `false` | Enable verifiable credential generation |

| `Tags` | `[]string` | `nil` | Metadata labels for policy and discovery |

| `LocalVerification` | `bool` | `false` | Enable decentralized request verification |

| `RequireOriginAuth` | `bool` | `false` | Validate incoming requests against token |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Create agent | `Agent(node_id=...)` | `new Agent({ nodeId })` | `agent.New(agent.Config{NodeID: ...})` |

| Register reasoner | `@app.reasoner()` | `agent.reasoner(name, handler)` | `a.RegisterReasoner(name, handler)` |

| Register skill | `@app.skill()` | `agent.skill(name, handler)` | N/A (use `RegisterReasoner`) |

| Include router | `app.include_router(r)` | `agent.includeRouter(r)` | N/A |

| Start server | `app.serve(port=8001)` | `agent.serve()` | `a.Serve(ctx)` |

| Auto-detect mode | `app.run()` | N/A | `a.Run(ctx)` |

| Call another agent | `await app.call("agent.fn", **input)` | `await agent.call("agent.fn", input)` | `a.Call(ctx, "agent.fn", input)` |

| AI structured output | `await app.ai(user=..., schema=Model)` | `await ctx.ai(prompt, { schema })` | `a.AI(ctx, prompt, opts)` |

| Run harness | `await app.harness(prompt)` | `await agent.harness(prompt)` | `a.Harness(ctx, prompt, schema, dest, opts)` |

| Access memory | `app.memory.set(key, val)` | `ctx.memory` | `a.Memory()` |

| Discover agents | `app.discover()` | `await agent.discover()` | `a.Discover(ctx)` |

| Shutdown | automatic on SIGTERM | `await agent.shutdown()` | automatic on SIGTERM |

---

## Reasoners

URL: https://agentfield.ai/docs/build/building-blocks/reasoners

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/reasoners

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: reasoner, ai, llm, decorator, handler, workflow, execution context

Summary: AI-powered functions with automatic workflow tracking, schema generation, and execution context

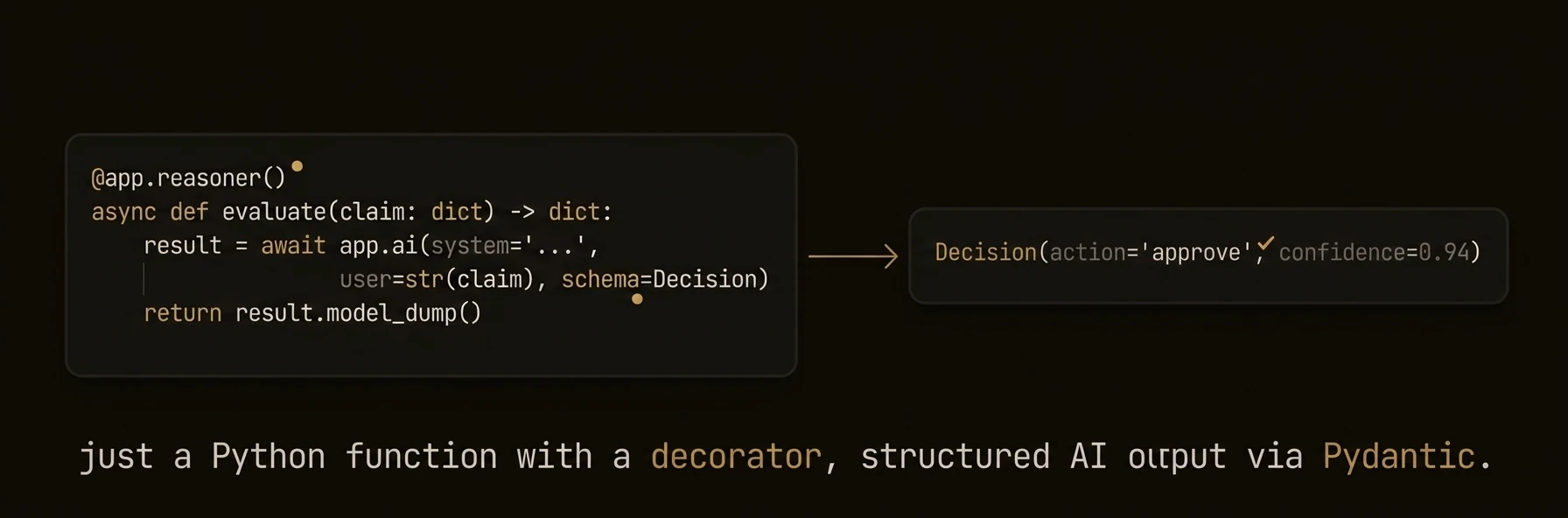

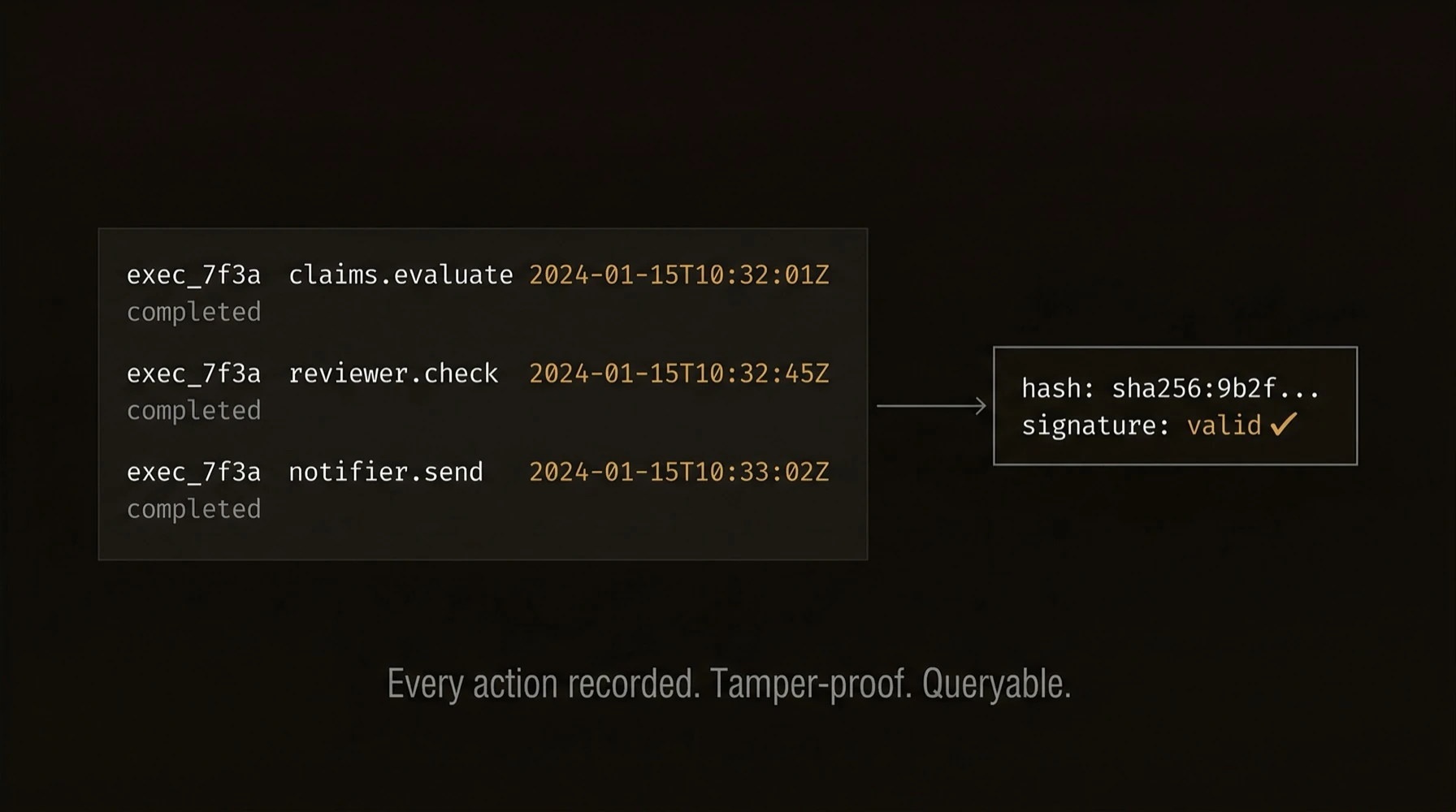

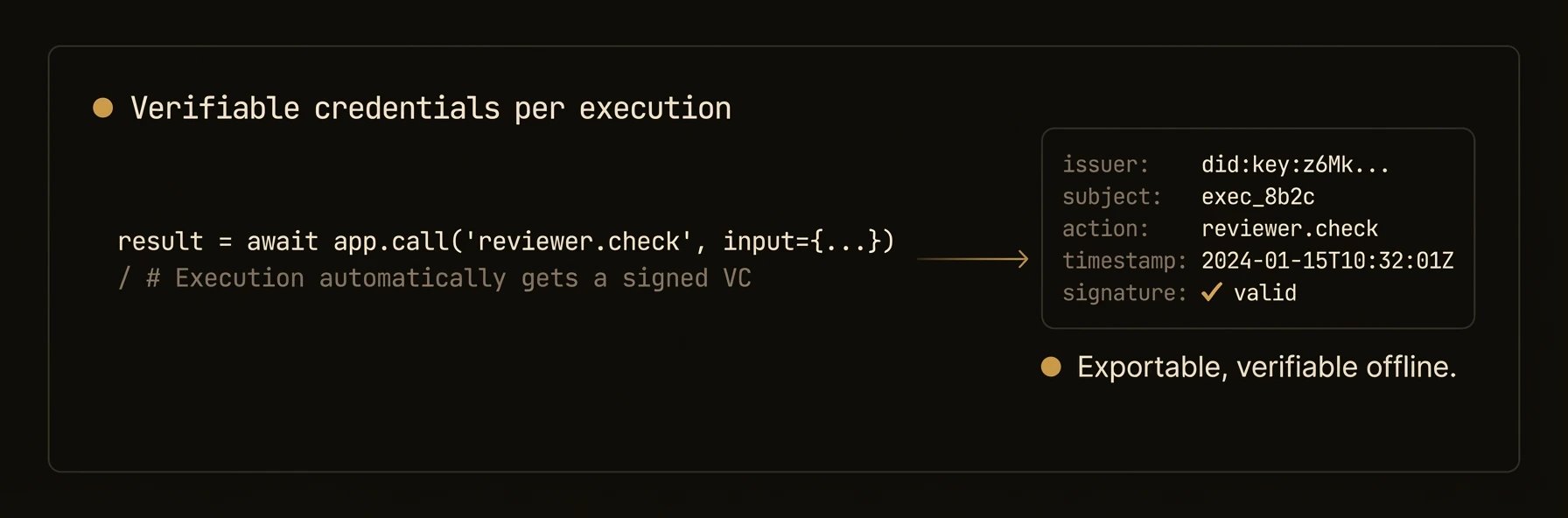

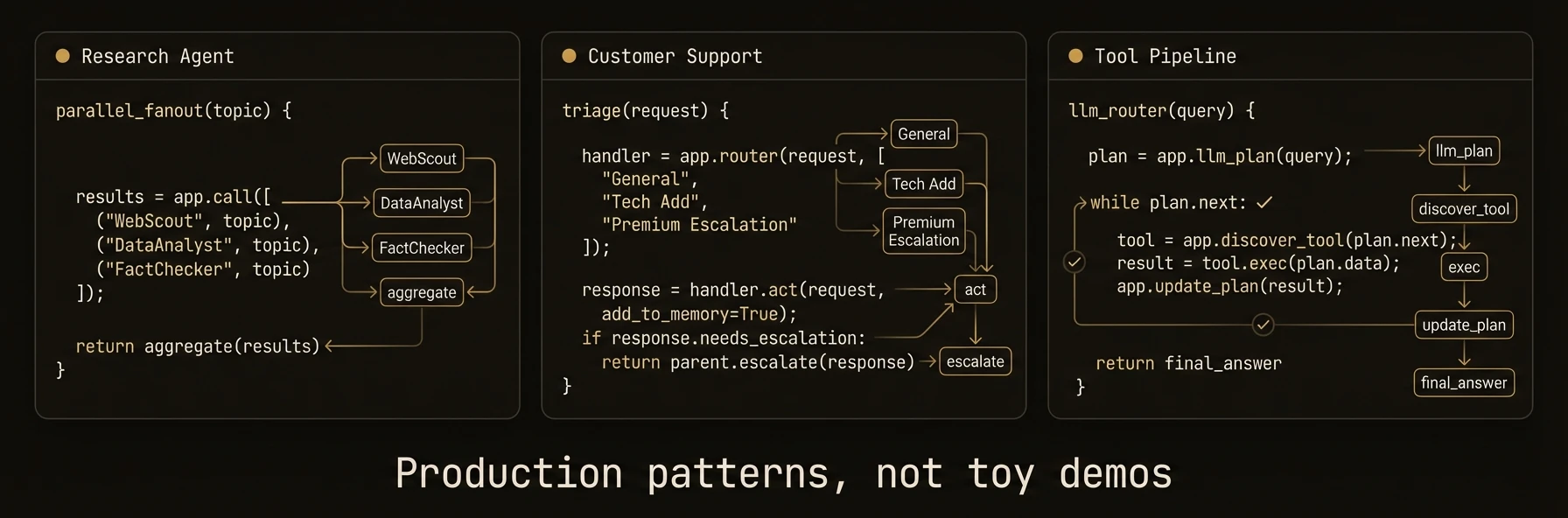

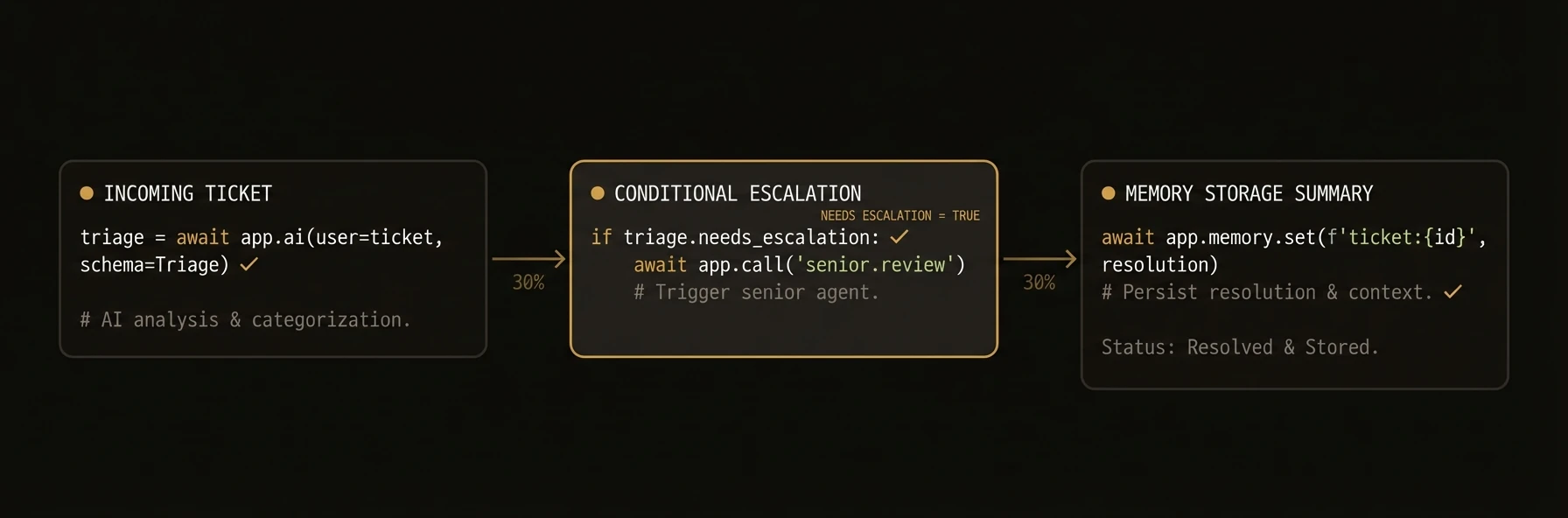

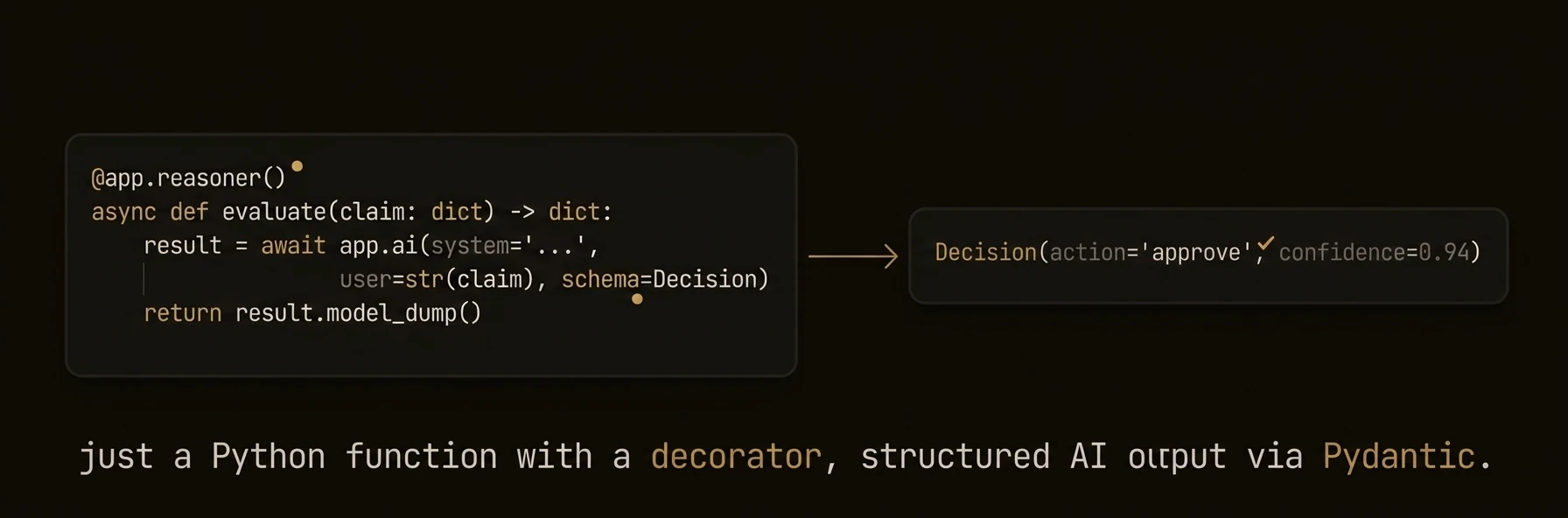

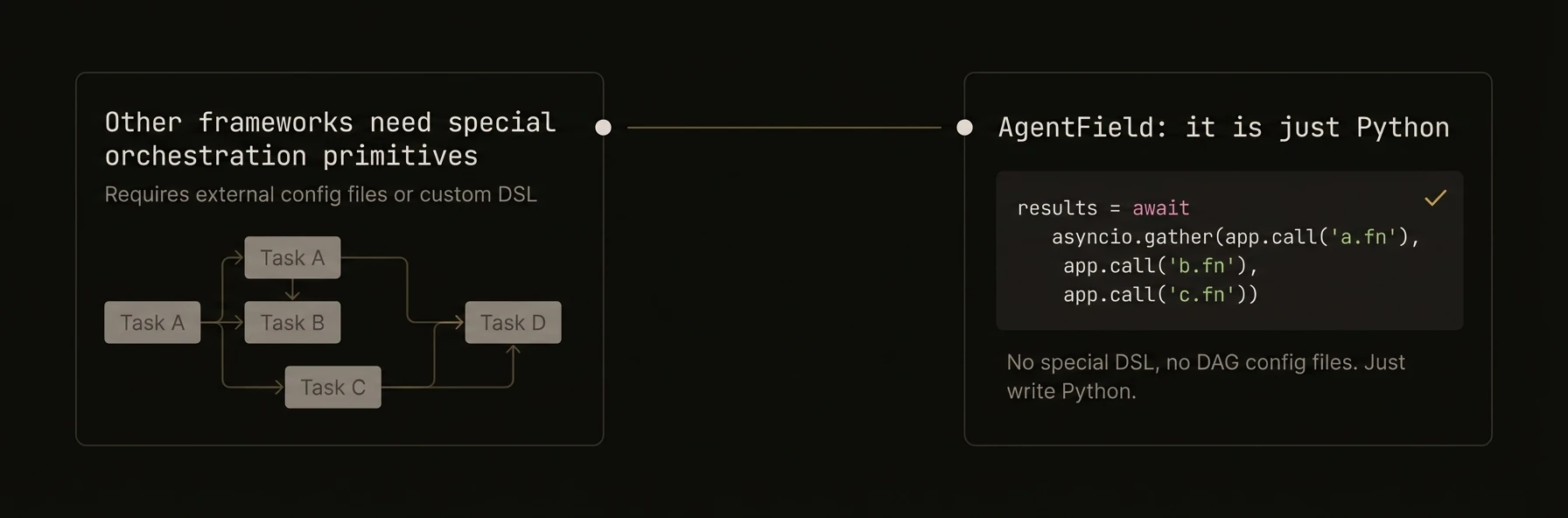

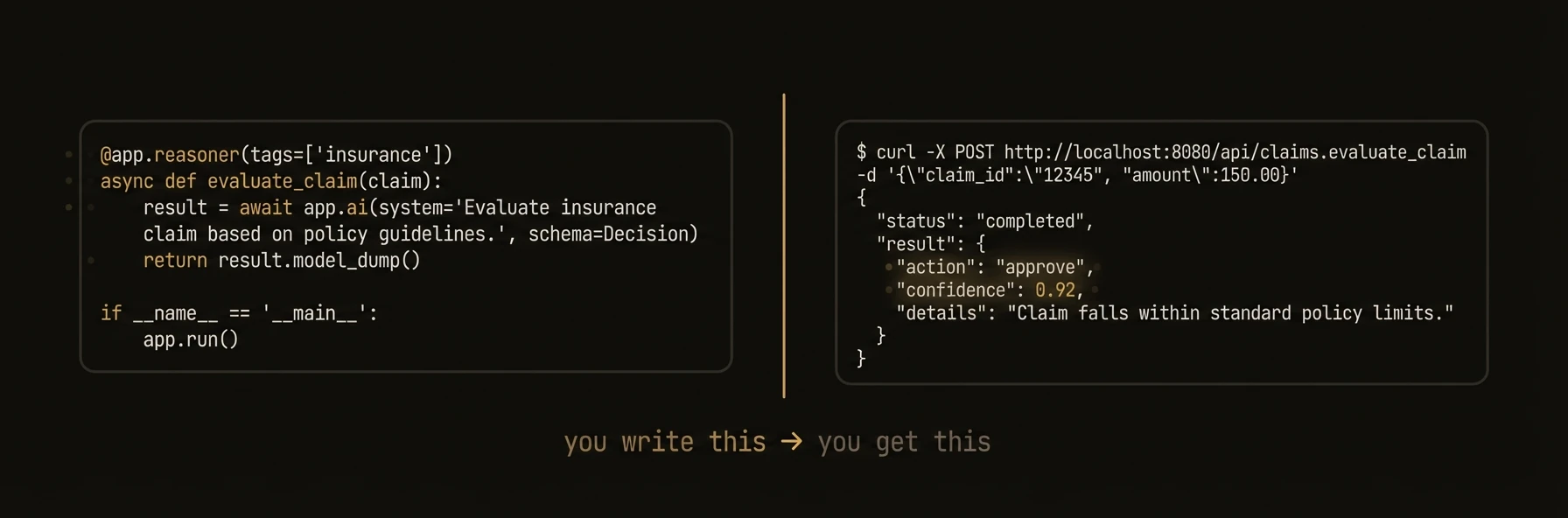

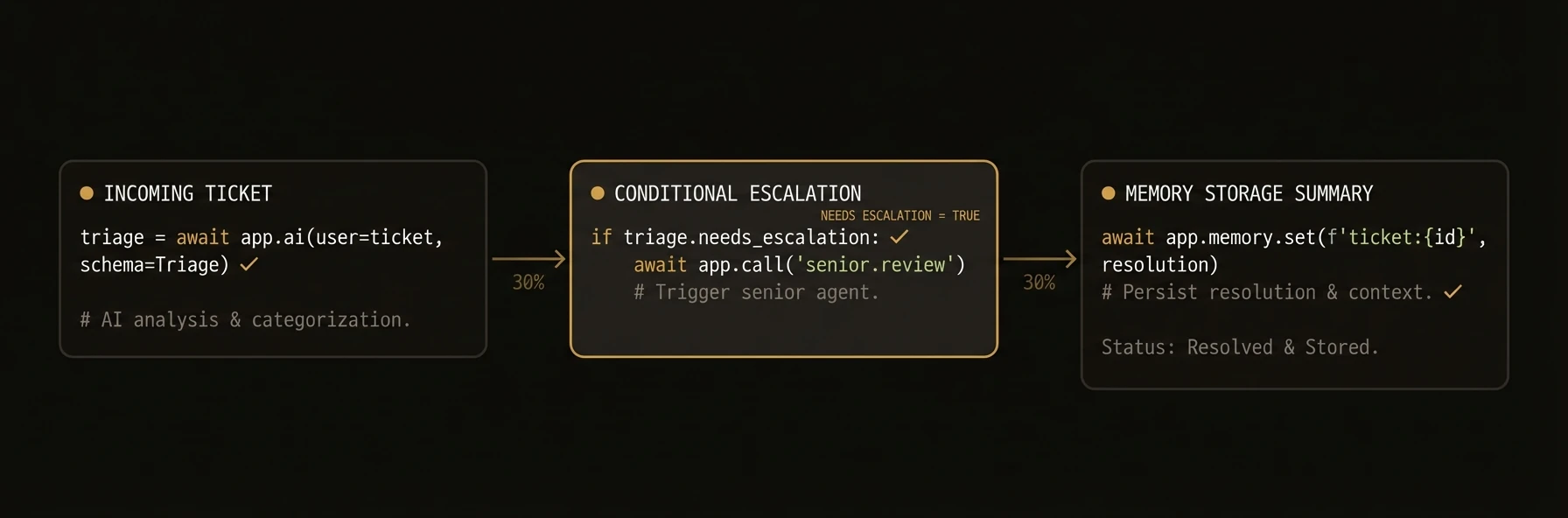

AI-powered functions that turn LLM calls into typed, tracked, auditable API endpoints.

The real production problem is not “how do I call a model?” It is “which parts of this workflow need AI judgment, and which parts need deterministic control?” Reasoners are where that boundary lives.

They are not just LLM wrappers. A reasoner combines AI analysis with your routing, validation, escalation, and side effects, then runs that workflow with full execution context and auditability.

### Python

### TypeScript

### Go

---

**What just happened**

- AI produced a typed triage decision instead of free-form text

- Your code handled the escalation side effect deterministically

- The reasoner emitted an execution note for observability

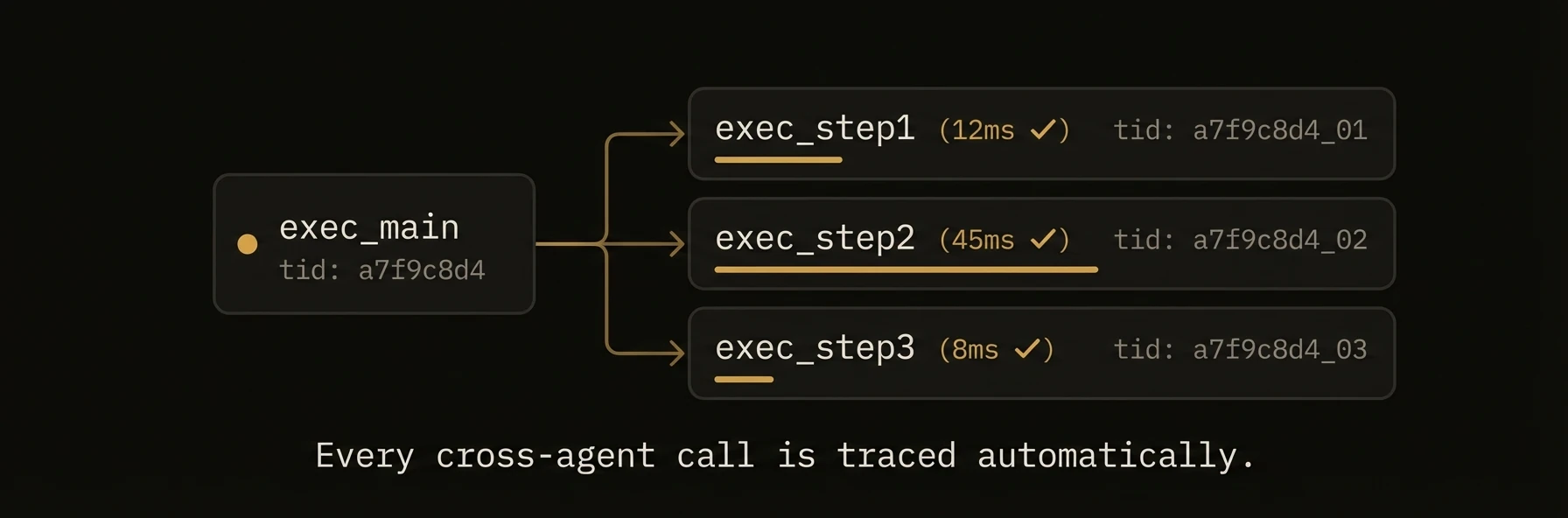

- AgentField attached execution IDs, workflow context, and an HTTP target automatically

Example target and result shape:

### What You Get

- **Automatic REST endpoints** generated from function signatures and type hints

- **Workflow tracking** with execution IDs, parent-child relationships, and DAG building

- **Schema generation** from type annotations (Python) or explicit schemas (TypeScript, Go)

- **Execution context** with run ID, session, memory access, and cross-agent call propagation

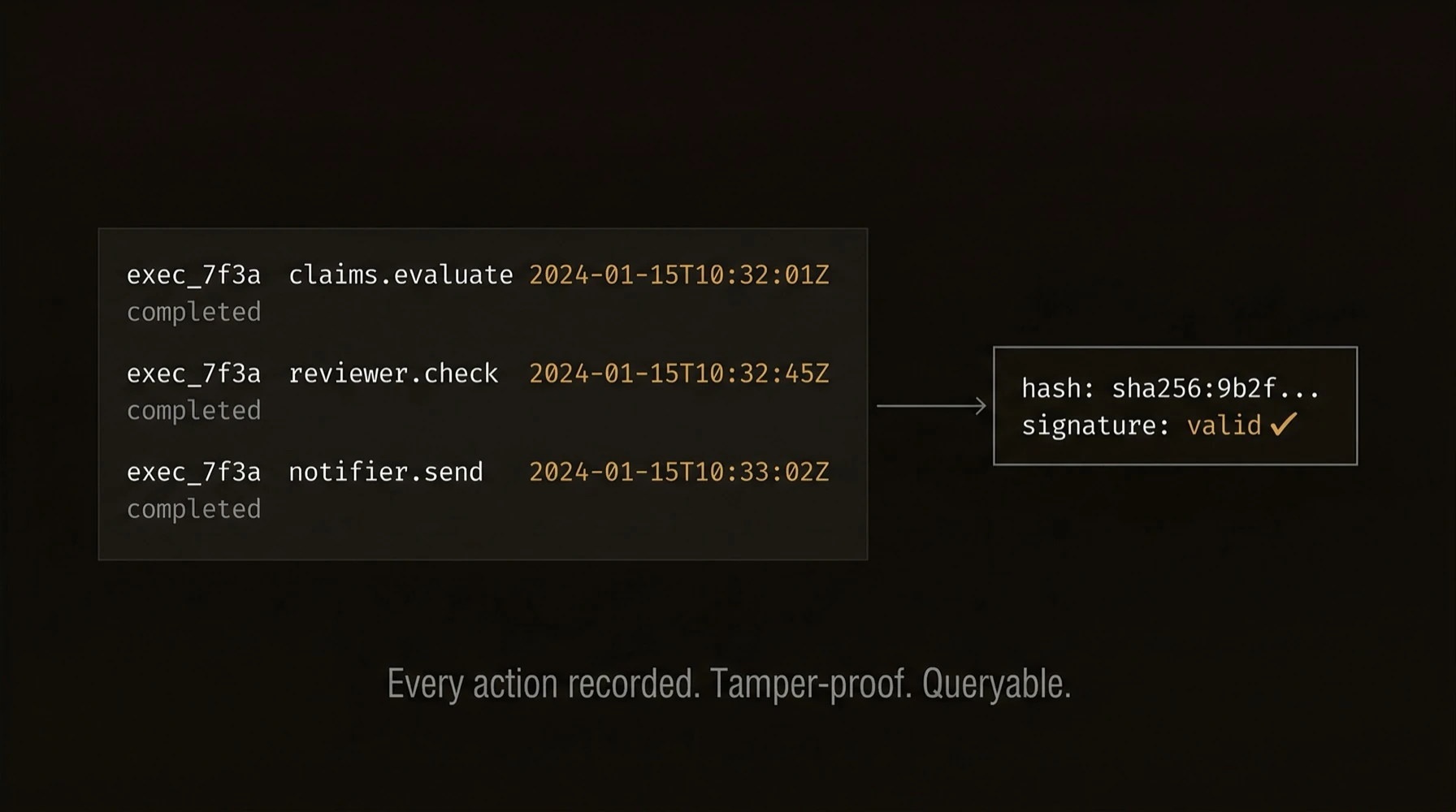

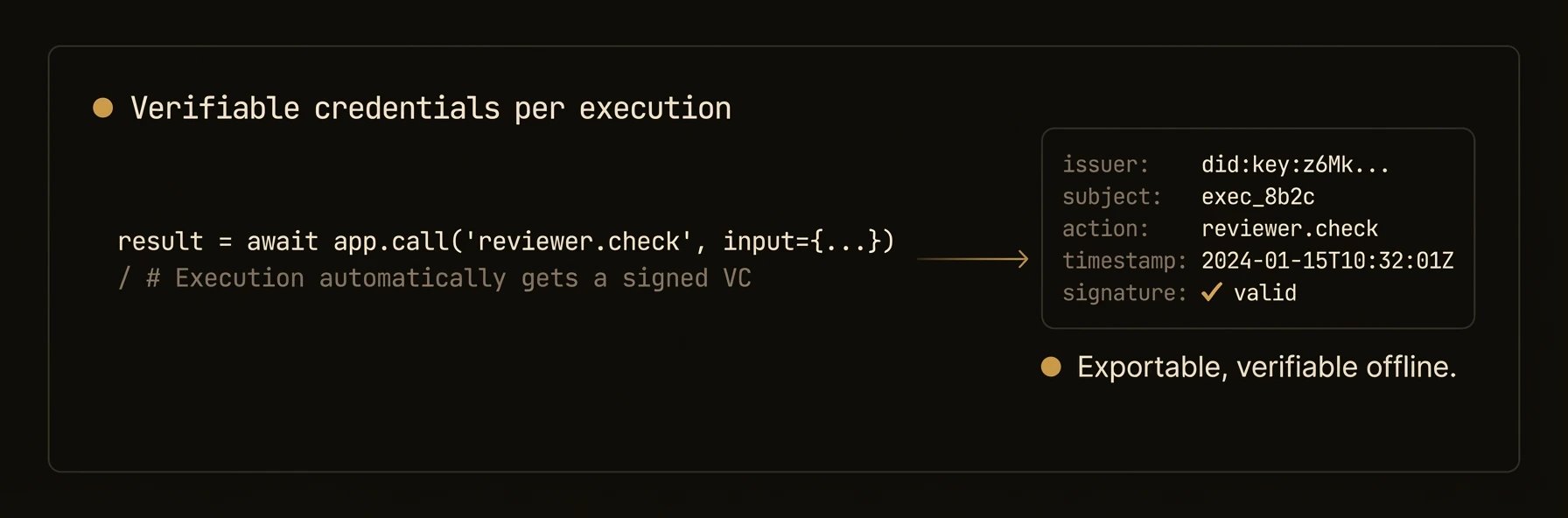

- **Verifiable credentials** for every execution when DID is enabled

- **Pydantic model conversion** (Python) for automatic input validation

### Patterns

### Execution context injection (Python)

When your reasoner function declares an `execution_context` parameter, the SDK automatically injects it:

### Python

### TypeScript

### Go

### Pydantic input validation (Python)

Reasoners automatically convert incoming JSON to Pydantic models when type hints are used:

### Python

### CLI-accessible reasoners (Go)

The Go SDK supports running reasoners directly from the command line:

### Go

### Registration Options

### Python `@app.reasoner()` decorator

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `path` | `str \| None` | `"/reasoners/{fn_name}"` | Custom API endpoint path |

| `name` | `str \| None` | function name | Explicit registration ID |

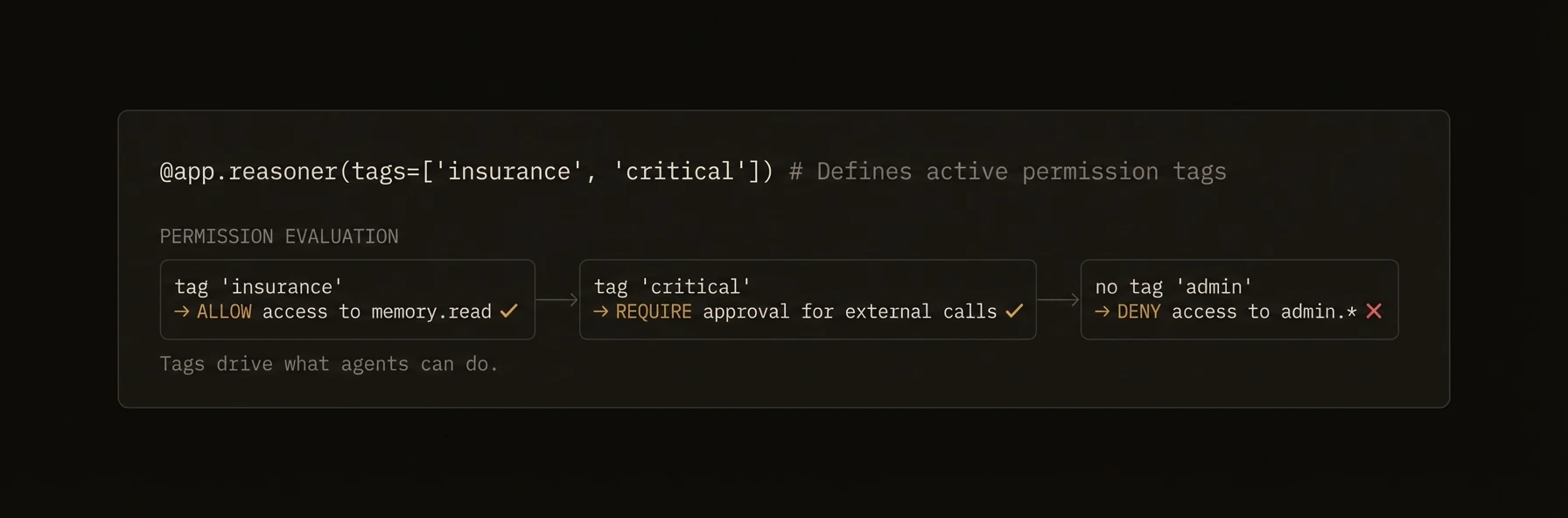

| `tags` | `list[str] \| None` | `None` | Organizational tags for discovery and policy |

| `vc_enabled` | `bool \| None` | `None` | Override agent-level VC generation |

| `require_realtime_validation` | `bool` | `False` | Force control-plane verification |

The standalone `@reasoner` decorator (from `agentfield.decorators`) adds `track_workflow` and `description` parameters and is used for module-level reasoners outside the agent decorator pattern.

### TypeScript `agent.reasoner()` options

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `tags` | `string[]` | `undefined` | Organizational tags |

| `description` | `string` | `undefined` | Human-readable description |

| `inputSchema` | `any` | `undefined` | JSON Schema for input validation |

| `outputSchema` | `any` | `undefined` | JSON Schema for output documentation |

| `trackWorkflow` | `boolean` | `undefined` | Enable workflow tracking |

| `requireRealtimeValidation` | `boolean` | `undefined` | Force control-plane verification |

### Go `RegisterReasoner()` options

| Option | Description |

|--------|-------------|

| `WithDescription(desc)` | Human-readable description for help and discovery |

| `WithReasonerTags(tags...)` | Tags for organization and tag-based authorization |

| `WithInputSchema(json.RawMessage)` | Override auto-generated input schema |

| `WithOutputSchema(json.RawMessage)` | Override default output schema |

| `WithVCEnabled(bool)` | Override agent-level VC generation |

| `WithCLI()` | Make this reasoner accessible from the CLI |

| `WithDefaultCLI()` | Set as the default CLI handler |

| `WithCLIFormatter(func)` | Custom output formatter for CLI mode |

| `WithRequireRealtimeValidation()` | Force control-plane verification |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Register | `@app.reasoner()` | `agent.reasoner(name, handler, opts?)` | `a.RegisterReasoner(name, handler, opts...)` |

| Handler signature | `async def fn(arg: Type) -> Type` | `(ctx: ReasonerContext) => Promise` | `func(ctx, input map[string]any) (any, error)` |

| Access input | Function parameters | `ctx.input` | `input` map |

| Access AI | `await app.ai(...)` | `await ctx.ai(...)` | `a.AI(ctx, ...)` |

| Cross-agent call | `await app.call(target, input)` | `await ctx.call(target, input)` | `a.Call(ctx, target, input)` |

| Access memory | `app.memory.set(key, val)` | `ctx.memory.set(key, val)` | `a.Memory().Set(ctx, key, val)` |

| Get execution context | `execution_context` parameter | `ctx.executionId`, `ctx.runId` | `agent.ExecutionContextFrom(ctx)` |

---

## Routers

URL: https://agentfield.ai/docs/build/building-blocks/routers

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/routers

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: router, namespace, module, organization, prefix, include_router

Summary: Organize reasoners and skills into namespaced modules with AgentRouter

AI-powered functions that turn LLM calls into typed, tracked, auditable API endpoints.

The real production problem is not “how do I call a model?” It is “which parts of this workflow need AI judgment, and which parts need deterministic control?” Reasoners are where that boundary lives.

They are not just LLM wrappers. A reasoner combines AI analysis with your routing, validation, escalation, and side effects, then runs that workflow with full execution context and auditability.

### Python

### TypeScript

### Go

---

**What just happened**

- AI produced a typed triage decision instead of free-form text

- Your code handled the escalation side effect deterministically

- The reasoner emitted an execution note for observability

- AgentField attached execution IDs, workflow context, and an HTTP target automatically

Example target and result shape:

### What You Get

- **Automatic REST endpoints** generated from function signatures and type hints

- **Workflow tracking** with execution IDs, parent-child relationships, and DAG building

- **Schema generation** from type annotations (Python) or explicit schemas (TypeScript, Go)

- **Execution context** with run ID, session, memory access, and cross-agent call propagation

- **Verifiable credentials** for every execution when DID is enabled

- **Pydantic model conversion** (Python) for automatic input validation

### Patterns

### Execution context injection (Python)

When your reasoner function declares an `execution_context` parameter, the SDK automatically injects it:

### Python

### TypeScript

### Go

### Pydantic input validation (Python)

Reasoners automatically convert incoming JSON to Pydantic models when type hints are used:

### Python

### CLI-accessible reasoners (Go)

The Go SDK supports running reasoners directly from the command line:

### Go

### Registration Options

### Python `@app.reasoner()` decorator

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `path` | `str \| None` | `"/reasoners/{fn_name}"` | Custom API endpoint path |

| `name` | `str \| None` | function name | Explicit registration ID |

| `tags` | `list[str] \| None` | `None` | Organizational tags for discovery and policy |

| `vc_enabled` | `bool \| None` | `None` | Override agent-level VC generation |

| `require_realtime_validation` | `bool` | `False` | Force control-plane verification |

The standalone `@reasoner` decorator (from `agentfield.decorators`) adds `track_workflow` and `description` parameters and is used for module-level reasoners outside the agent decorator pattern.

### TypeScript `agent.reasoner()` options

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `tags` | `string[]` | `undefined` | Organizational tags |

| `description` | `string` | `undefined` | Human-readable description |

| `inputSchema` | `any` | `undefined` | JSON Schema for input validation |

| `outputSchema` | `any` | `undefined` | JSON Schema for output documentation |

| `trackWorkflow` | `boolean` | `undefined` | Enable workflow tracking |

| `requireRealtimeValidation` | `boolean` | `undefined` | Force control-plane verification |

### Go `RegisterReasoner()` options

| Option | Description |

|--------|-------------|

| `WithDescription(desc)` | Human-readable description for help and discovery |

| `WithReasonerTags(tags...)` | Tags for organization and tag-based authorization |

| `WithInputSchema(json.RawMessage)` | Override auto-generated input schema |

| `WithOutputSchema(json.RawMessage)` | Override default output schema |

| `WithVCEnabled(bool)` | Override agent-level VC generation |

| `WithCLI()` | Make this reasoner accessible from the CLI |

| `WithDefaultCLI()` | Set as the default CLI handler |

| `WithCLIFormatter(func)` | Custom output formatter for CLI mode |

| `WithRequireRealtimeValidation()` | Force control-plane verification |

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Register | `@app.reasoner()` | `agent.reasoner(name, handler, opts?)` | `a.RegisterReasoner(name, handler, opts...)` |

| Handler signature | `async def fn(arg: Type) -> Type` | `(ctx: ReasonerContext) => Promise` | `func(ctx, input map[string]any) (any, error)` |

| Access input | Function parameters | `ctx.input` | `input` map |

| Access AI | `await app.ai(...)` | `await ctx.ai(...)` | `a.AI(ctx, ...)` |

| Cross-agent call | `await app.call(target, input)` | `await ctx.call(target, input)` | `a.Call(ctx, target, input)` |

| Access memory | `app.memory.set(key, val)` | `ctx.memory.set(key, val)` | `a.Memory().Set(ctx, key, val)` |

| Get execution context | `execution_context` parameter | `ctx.executionId`, `ctx.runId` | `agent.ExecutionContextFrom(ctx)` |

---

## Routers

URL: https://agentfield.ai/docs/build/building-blocks/routers

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/routers

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: router, namespace, module, organization, prefix, include_router

Summary: Organize reasoners and skills into namespaced modules with AgentRouter

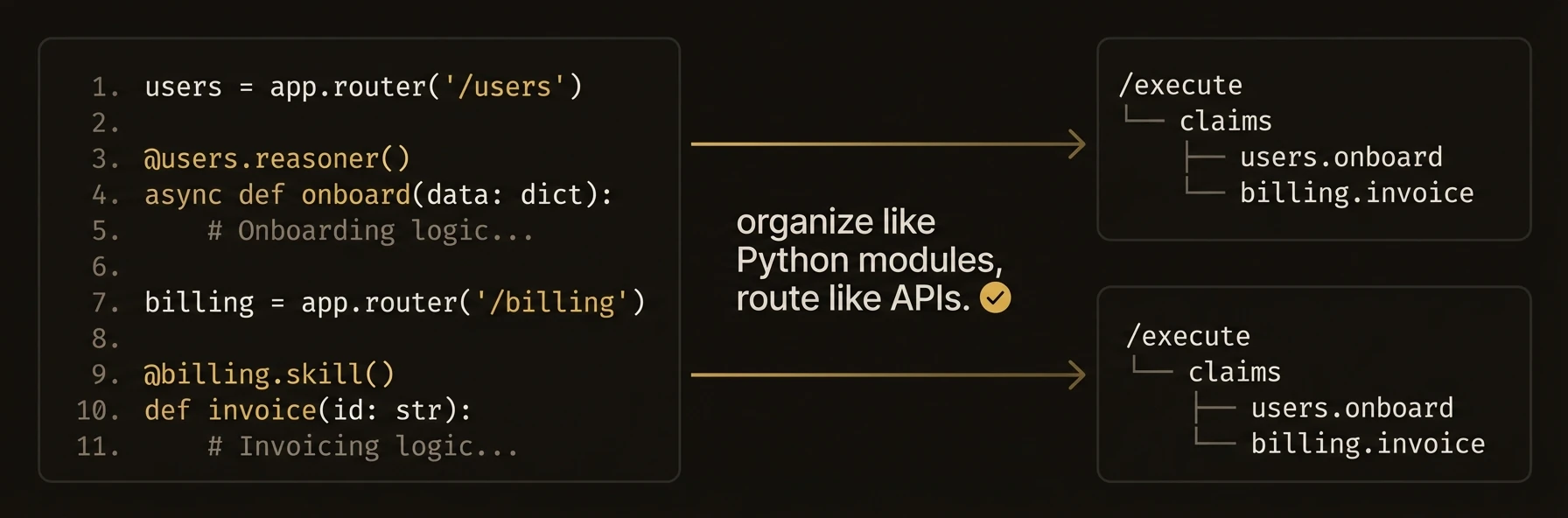

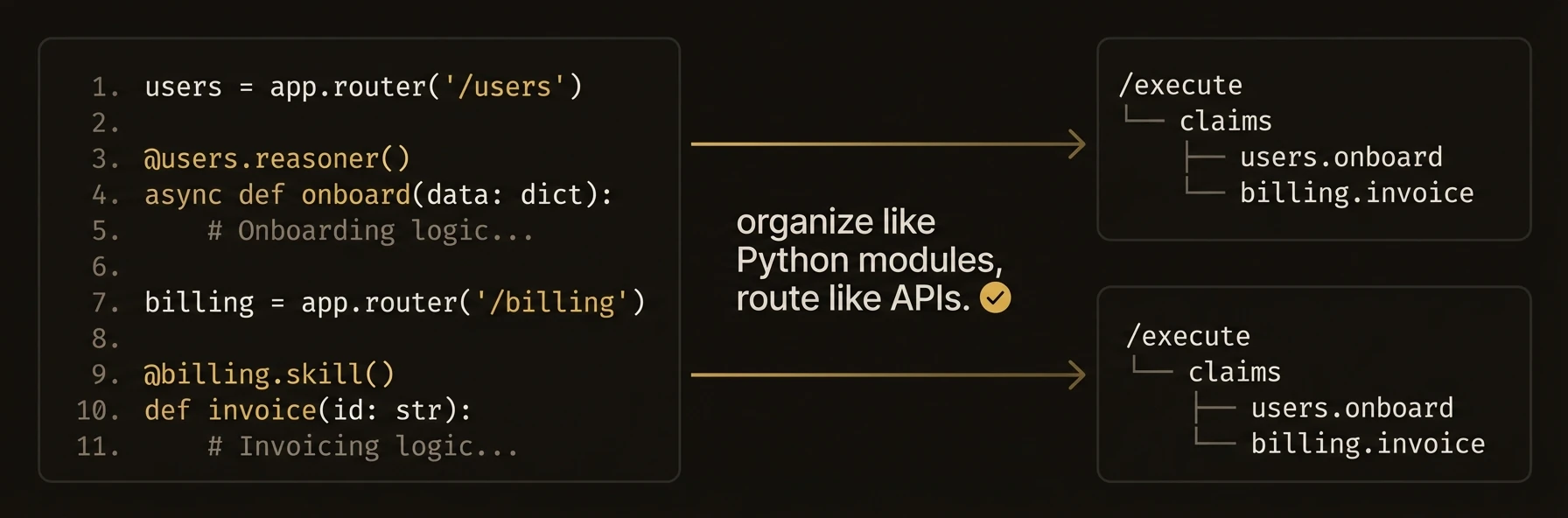

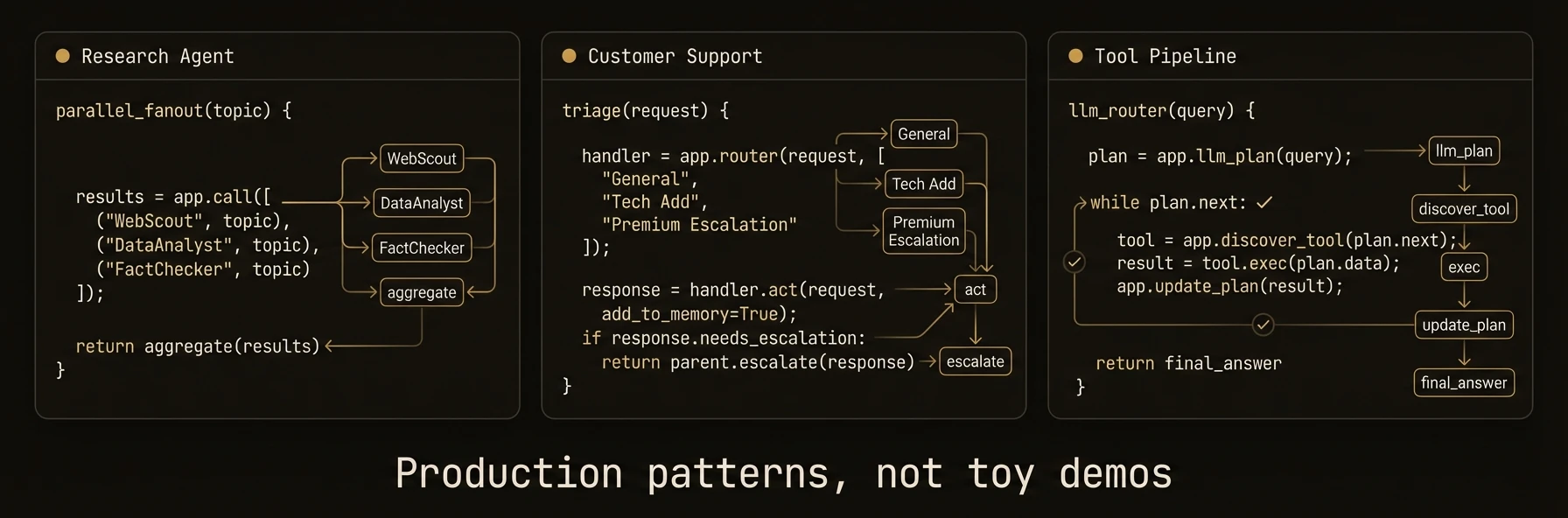

Organize reasoners and skills into reusable, namespaced modules -- like FastAPI's `APIRouter` for agents.

Routers matter once an agent grows beyond a handful of functions. They do not just organize code. They shape the public callable surface of your agent by turning prefixes into namespaced function IDs.

### Python

### TypeScript

---

**What just happened**

- Two logical domains became one agent with a cleaner public API

- Router prefixes became part of the callable function identity

- The same router pattern can be reused across multiple agents or packages

Concrete target examples:

### Patterns

### Multi-module agent

### Python

### TypeScript

### Shared utility router

### Python

### Go alternative: naming conventions

Since Go does not have AgentRouter, use consistent naming and tags:

### Go

### SDK Availability

| SDK | AgentRouter | Alternative |

|-----|-------------|-------------|

| Python | Yes | -- |

| TypeScript | Yes | -- |

| Go | **Not available** | Use naming conventions and tags |

The Go SDK does not have an AgentRouter abstraction. Organize Go agent code using consistent naming conventions (e.g., `math_add`, `math_multiply`) and tags.

### Constructor

### Python `AgentRouter`

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `prefix` | `str` | `""` | Path prefix prepended to all registered function paths |

| `tags` | `list[str] \| None` | `None` | Tags inherited by all reasoners and skills in this router |

### TypeScript `AgentRouter`

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `prefix` | `string` | `undefined` | Name prefix prepended to all registered functions |

| `tags` | `string[]` | `undefined` | Tags for the router (note: tag inheritance to child reasoners/skills only works in Python, not TypeScript) |

### SDK Reference

| Operation | Python | TypeScript |

|-----------|--------|------------|

| Create router | `AgentRouter(prefix="x")` | `new AgentRouter({ prefix: "x" })` |

| Register reasoner | `@router.reasoner()` | `router.reasoner(name, handler, opts?)` |

| Register skill | `@router.skill()` | `router.skill(name, handler, opts?)` |

| Attach to agent | `app.include_router(router)` | `agent.includeRouter(router)` |

| Access agent methods | `router.ai()`, `router.call()` | via `ctx` parameter |

| Access underlying agent | `router.app` | N/A |

---

## Skills

URL: https://agentfield.ai/docs/build/building-blocks/skills

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/skills

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: skill, deterministic, function, business logic, integration, data processing

Summary: Deterministic functions for business logic, integrations, and data processing

Organize reasoners and skills into reusable, namespaced modules -- like FastAPI's `APIRouter` for agents.

Routers matter once an agent grows beyond a handful of functions. They do not just organize code. They shape the public callable surface of your agent by turning prefixes into namespaced function IDs.

### Python

### TypeScript

---

**What just happened**

- Two logical domains became one agent with a cleaner public API

- Router prefixes became part of the callable function identity

- The same router pattern can be reused across multiple agents or packages

Concrete target examples:

### Patterns

### Multi-module agent

### Python

### TypeScript

### Shared utility router

### Python

### Go alternative: naming conventions

Since Go does not have AgentRouter, use consistent naming and tags:

### Go

### SDK Availability

| SDK | AgentRouter | Alternative |

|-----|-------------|-------------|

| Python | Yes | -- |

| TypeScript | Yes | -- |

| Go | **Not available** | Use naming conventions and tags |

The Go SDK does not have an AgentRouter abstraction. Organize Go agent code using consistent naming conventions (e.g., `math_add`, `math_multiply`) and tags.

### Constructor

### Python `AgentRouter`

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `prefix` | `str` | `""` | Path prefix prepended to all registered function paths |

| `tags` | `list[str] \| None` | `None` | Tags inherited by all reasoners and skills in this router |

### TypeScript `AgentRouter`

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `prefix` | `string` | `undefined` | Name prefix prepended to all registered functions |

| `tags` | `string[]` | `undefined` | Tags for the router (note: tag inheritance to child reasoners/skills only works in Python, not TypeScript) |

### SDK Reference

| Operation | Python | TypeScript |

|-----------|--------|------------|

| Create router | `AgentRouter(prefix="x")` | `new AgentRouter({ prefix: "x" })` |

| Register reasoner | `@router.reasoner()` | `router.reasoner(name, handler, opts?)` |

| Register skill | `@router.skill()` | `router.skill(name, handler, opts?)` |

| Attach to agent | `app.include_router(router)` | `agent.includeRouter(router)` |

| Access agent methods | `router.ai()`, `router.call()` | via `ctx` parameter |

| Access underlying agent | `router.app` | N/A |

---

## Skills

URL: https://agentfield.ai/docs/build/building-blocks/skills

Markdown: https://agentfield.ai/llm/docs/build/building-blocks/skills

Last-Modified: 2026-03-24T16:54:10.000Z

Category: building-blocks

Difficulty: beginner

Keywords: skill, deterministic, function, business logic, integration, data processing

Summary: Deterministic functions for business logic, integrations, and data processing

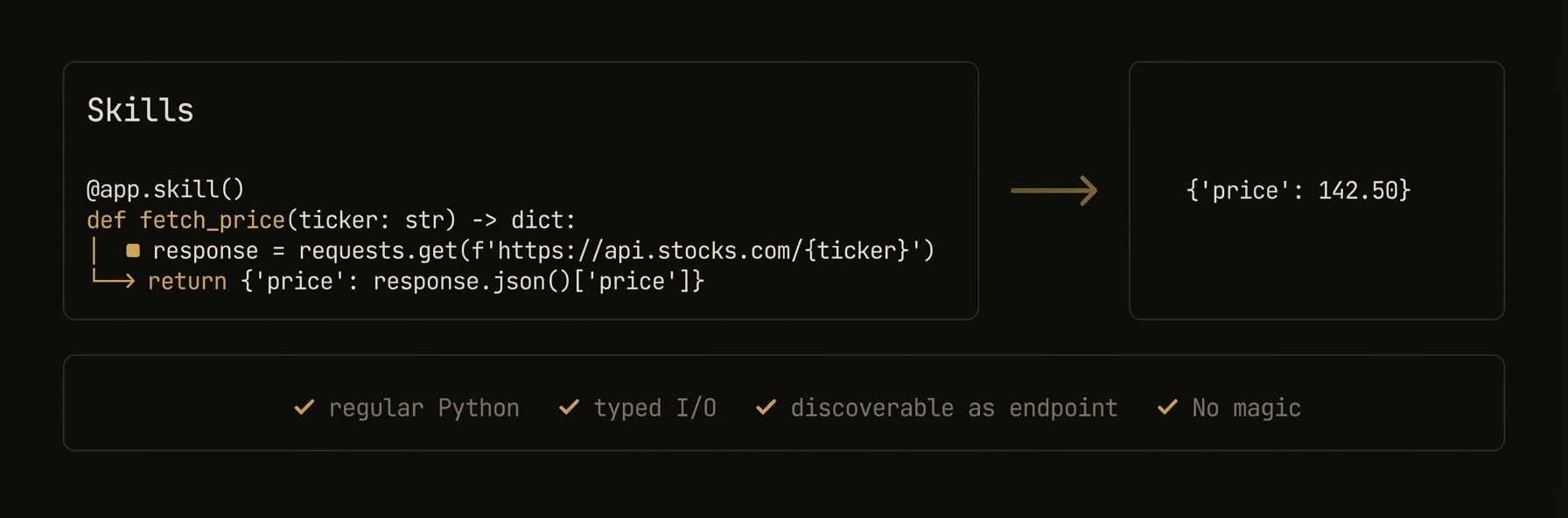

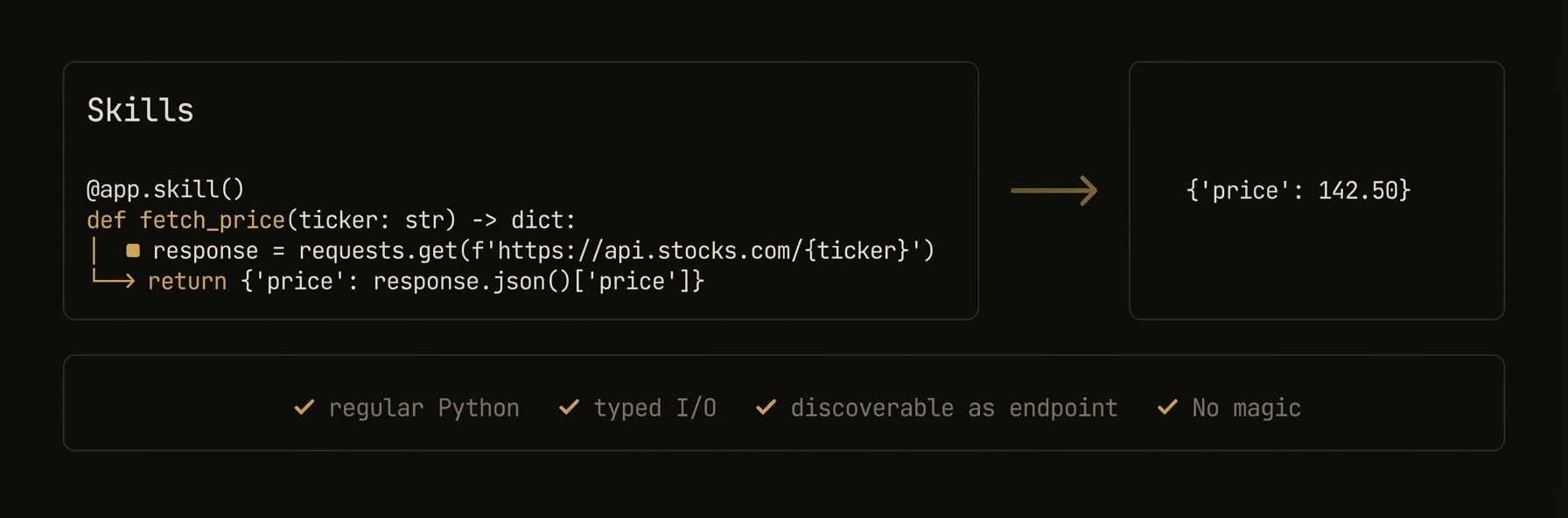

Deterministic functions for business logic that need the same infrastructure as reasoners -- without the AI.

This separation matters in production. When something breaks, you want to know whether the failure came from AI judgment or deterministic code. Skills are where you put systems-of-record access, calculations, API calls, and repeatable side effects.

### Python

```python

from agentfield import Agent

from pydantic import BaseModel

app = Agent(node_id="inventory-service", version="1.0.0")

class StockQuery(BaseModel):

sku: str

warehouse: str = "us-east"

@app.skill(tags=["database", "inventory"])

async def check_stock(query: StockQuery) -> dict:

"""Fetch deterministic inventory state."""

row = await db.execute(

"SELECT sku, qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2",

query.sku, query.warehouse,

)

if not row:

return {"sku": query.sku, "available": 0, "status": "not_found"}

available = row["qty"] - row["reserved"]

return {"sku": query.sku, "available": available, "status": "in_stock" if available > 0 else "out_of_stock"}

@app.skill(tags=["integrations", "shipping", "policy"])

async def fulfillable_quote(sku: str, dest: str, weight_kg: float) -> dict:

stock = await check_stock(StockQuery(sku=sku))

if stock["available"] <= 0:

return {"sku": sku, "fulfillable": False, "reason": "out_of_stock"}

rates = await fedex_client.rate_quote(origin="us-east", dest=dest, weight=weight_kg)

return {"sku": sku, "fulfillable": True, "rates": rates, "currency": "USD"}

app.run()

# → POST /skills/check_stock — auto-generated REST endpoint with input validation

# → POST /skills/fulfillable_quote — discoverable by any agent in the fleet

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({ nodeId: 'inventory-service', version: '1.0.0' });

agent.skill('check_stock', async (ctx) => {

const { sku, warehouse = 'us-east' } = ctx.input;

const row = await db.query('SELECT sku, qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2', [sku, warehouse]);

if (!row) return { sku, available: 0, status: 'not_found' };

const available = row.qty - row.reserved;

return { sku, available, status: available > 0 ? 'in_stock' : 'out_of_stock' };

}, {

tags: ['database', 'inventory'],

description: 'Query real-time inventory by SKU and warehouse',

});

agent.skill('fulfillableQuote', async (ctx) => {

const stock = await agent.call('inventory-service.check_stock', {

sku: ctx.input.sku,

warehouse: 'us-east',

});

if (stock.available <= 0) {

return { sku: ctx.input.sku, fulfillable: false, reason: 'out_of_stock' };

}

const rates = await fedexClient.rateQuote({

origin: 'us-east',

dest: ctx.input.dest,

weight: ctx.input.weightKg,

});

return { sku: ctx.input.sku, fulfillable: true, rates, currency: 'USD' };

}, {

tags: ['integrations', 'shipping', 'policy'],

description: 'Check fulfillment viability and return shipping options',

});

agent.serve();

// → POST /skills/check_stock — auto-generated REST endpoint with input validation

// → POST /skills/fulfillableQuote — discoverable by any agent in the fleet

```

### Go

```go

// The Go SDK does not have a separate skill registration method.

// Use RegisterReasoner for all functions, whether AI-powered or deterministic.

// This registers /reasoners/check_stock, not /skills/check_stock.

a.RegisterReasoner("check_stock", func(ctx context.Context, input map[string]any) (any, error) {

sku, _ := input["sku"].(string)

warehouse, _ := input["warehouse"].(string)

if warehouse == "" {

warehouse = "us-east"

}

row, err := db.QueryRow(ctx, "SELECT qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2", sku, warehouse)

if err != nil {

return map[string]any{"sku": sku, "available": 0, "status": "not_found"}, nil

}

available := row.Qty - row.Reserved

status := "out_of_stock"

if available > 0 {

status = "in_stock"

}

return map[string]any{"sku": sku, "available": available, "status": status}, nil

},

agent.WithDescription("Query real-time inventory by SKU and warehouse"),

agent.WithReasonerTags("database", "inventory"),

)

```

---

**What just happened**

- Skills handled deterministic state and policy logic without any model call

- The second skill composed the first skill into a production-shaped workflow

- Both skills inherited the same endpoint generation, discoverability, and execution tracking as reasoners

- In Go, the same deterministic pattern is registered with `RegisterReasoner` and exposed under `/reasoners/{name}` instead of `/skills/{name}`

Example proof:

```text

Python/TypeScript:

POST /skills/check_stock

POST /skills/fulfillable_quote

discoverable target: inventory-service.fulfillable_quote

Go:

POST /reasoners/check_stock

```

### Skills vs Reasoners

| Aspect | Reasoner | Skill |

|--------|----------|-------|

| **Purpose** | AI-powered inference and generation | Deterministic business logic |

| **LLM calls** | Typically uses `app.ai()` or `app.harness()` | Typically no LLM calls |

| **Output** | May vary across runs | Same input always produces same output |

| **Use cases** | Classification, generation, analysis | API calls, database ops, calculations, formatting |

| **Endpoint prefix** | `/reasoners/{name}` | `/skills/{name}` |

Both share the same execution infrastructure: workflow tracking, execution context, verifiable credentials, and cross-agent communication.

### Patterns

### Database integration skill

Skills are ideal for wrapping database queries with validation and consistent return shapes:

### Python

```python

from pydantic import BaseModel

from typing import Optional

class UserQuery(BaseModel):

user_id: str

fields: list[str] = ["name", "email"]

@app.skill(tags=["database", "users"])

async def get_user(query: UserQuery) -> dict:

# Skills can be async for I/O operations

user = await db.users.find_one({"_id": query.user_id})

if not user:

return {"error": "User not found", "user_id": query.user_id}

return {k: user.get(k) for k in query.fields if k in user}

```

### TypeScript

```typescript

agent.skill('get_user', async (ctx) => {

const { user_id, fields = ['name', 'email'] } = ctx.input;

const user = await db.users.findOne({ _id: user_id });

if (!user) {

return { error: 'User not found', user_id };

}

return Object.fromEntries(fields.filter((f) => f in user).map((f) => [f, user[f]]));

}, {

tags: ['database', 'users'],

description: 'Fetch user fields from the database',

});

```

### MCP tool auto-registration (Python)

The Python SDK can automatically discover MCP servers and register their tools as skills:

### Python

```python

app = Agent(

node_id="mcp-bridge",

enable_mcp=True,

)

# MCP tools are automatically discovered and registered as skills

# with the naming pattern: {server_alias}_{tool_name}

# Each tool gets a /skills/{skill_name} endpoint

```

### Combining skills and reasoners

A common pattern is using skills for data retrieval and reasoners for AI analysis:

### Python

```python

@app.skill(tags=["data"])

async def fetch_metrics(service: str, window: str = "24h") -> dict:

metrics = await monitoring_api.query(service, window)

return {"service": service, "metrics": metrics}

@app.reasoner(tags=["analysis"])

async def diagnose(service: str) -> dict:

# Skill handles data retrieval (deterministic)

metrics = await fetch_metrics(service, window="1h")

# Reasoner handles AI analysis (non-deterministic)

diagnosis = await app.ai(

system="You are an SRE diagnosing service issues.",

user=f"Analyze metrics for {service}: {metrics}",

)

return {"service": service, "diagnosis": diagnosis}

```

### TypeScript

```typescript

agent.skill('fetch_metrics', async (ctx) => {

const { service, window = '24h' } = ctx.input;

const metrics = await monitoringApi.query(service, window);

return { service, metrics };

}, { tags: ['data'] });

agent.reasoner('diagnose', async (ctx) => {

const { service } = ctx.input;

const metrics = await ctx.call('mcp-bridge.fetch_metrics', { service, window: '1h' });

const diagnosis = await ctx.ai(

`Analyze metrics for ${service}: ${JSON.stringify(metrics)}`,

{ system: 'You are an SRE diagnosing service issues.' },

);

return { service, diagnosis };

}, { tags: ['analysis'] });

```

### Registration Options

### Python `@app.skill()` decorator

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `tags` | `list[str] \| None` | `None` | Organizational tags for discovery and policy |

| `path` | `str \| None` | `"/skills/{fn_name}"` | Custom API endpoint path |

| `name` | `str \| None` | function name | Explicit registration ID |

| `vc_enabled` | `bool \| None` | `None` | Override agent-level VC generation |

| `require_realtime_validation` | `bool` | `False` | Force control-plane verification |

### TypeScript `agent.skill()` options

| Parameter | Type | Default | Description |

|-----------|------|---------|-------------|

| `tags` | `string[]` | `undefined` | Organizational tags |

| `description` | `string` | `undefined` | Human-readable description |

| `inputSchema` | `any` | `undefined` | JSON Schema for input validation |

| `outputSchema` | `any` | `undefined` | JSON Schema for output documentation |

| `requireRealtimeValidation` | `boolean` | `undefined` | Force control-plane verification |

### Go

The Go SDK does not distinguish between skills and reasoners at the registration level. All functions are registered with `RegisterReasoner`. Use tags to distinguish intent:

```go

a.RegisterReasoner("my_skill", handler,

agent.WithReasonerTags("skill", "deterministic"),

agent.WithDescription("A deterministic operation"),

)

```

### SDK Reference

| Operation | Python | TypeScript | Go |

|-----------|--------|------------|-----|

| Register | `@app.skill()` | `agent.skill(name, handler, opts?)` | `a.RegisterReasoner(name, handler)` |

| Handler signature | `def fn(arg: Type) -> Type` | `(ctx: SkillContext) => T \| Promise` | `func(ctx, input) (any, error)` |

| Sync support | Yes (sync or async) | Yes (sync or async) | Sync only |

| Access input | Function parameters | `ctx.input` | `input` map |

| Access memory | `app.memory.set(key, val)` | `ctx.memory.set(key, val)` | `a.Memory().Set(ctx, key, val)` |

| Endpoint prefix | `/skills/` | `/skills/` | `/reasoners/` |

---

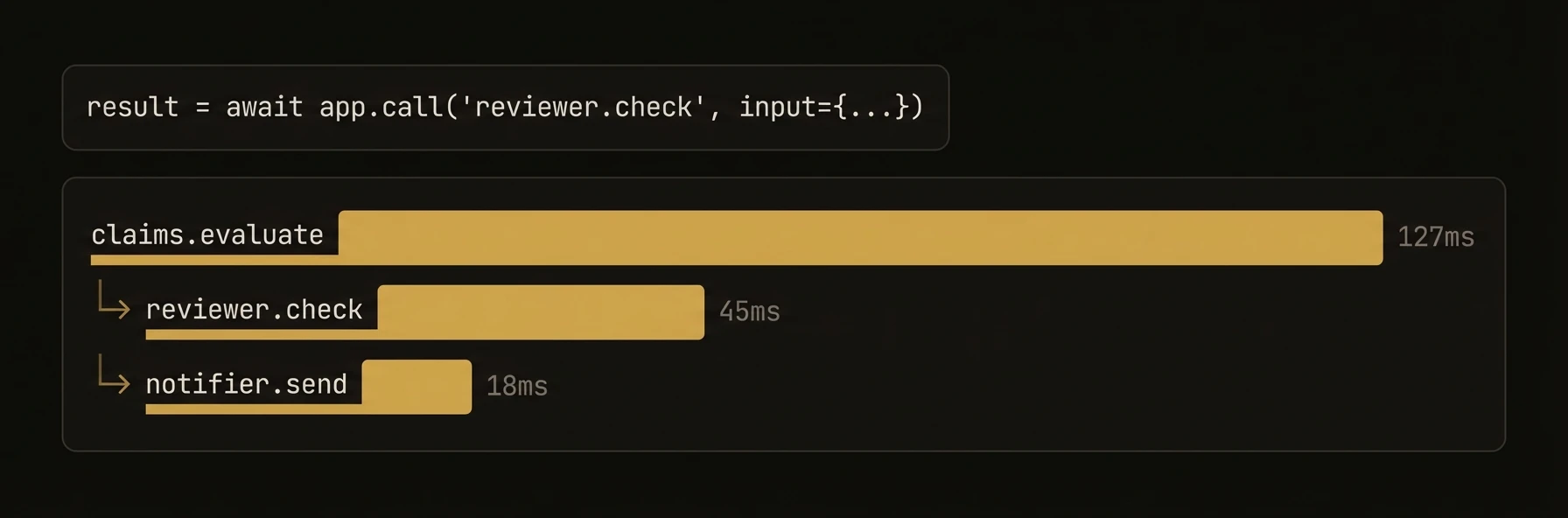

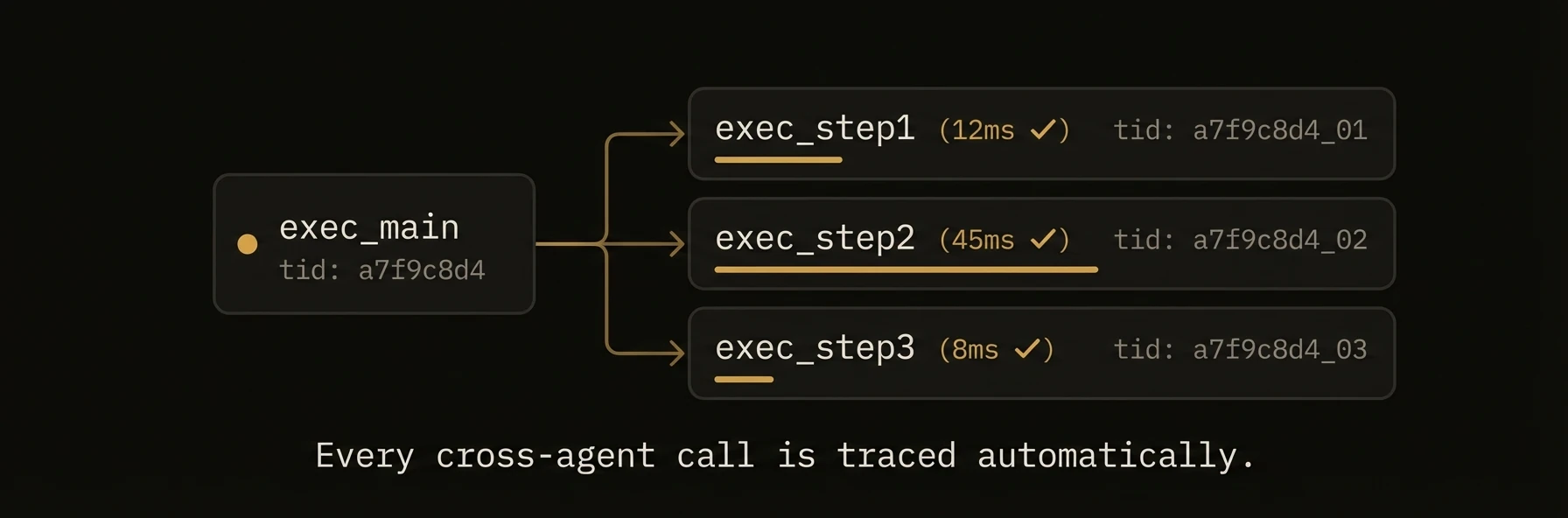

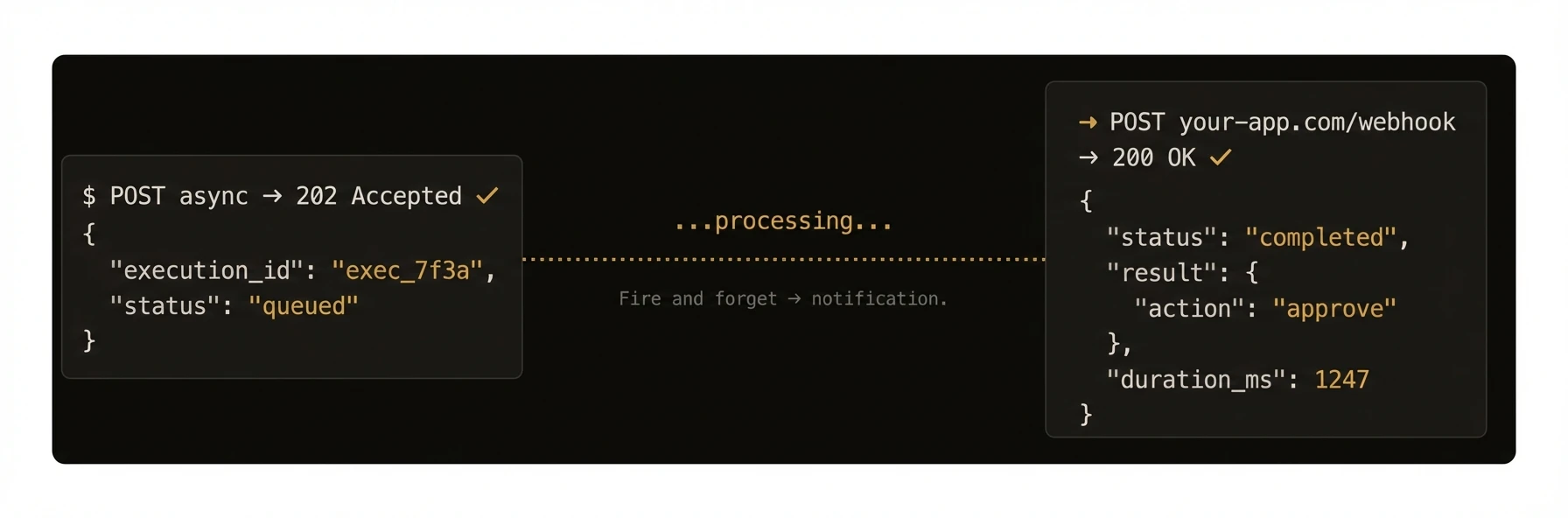

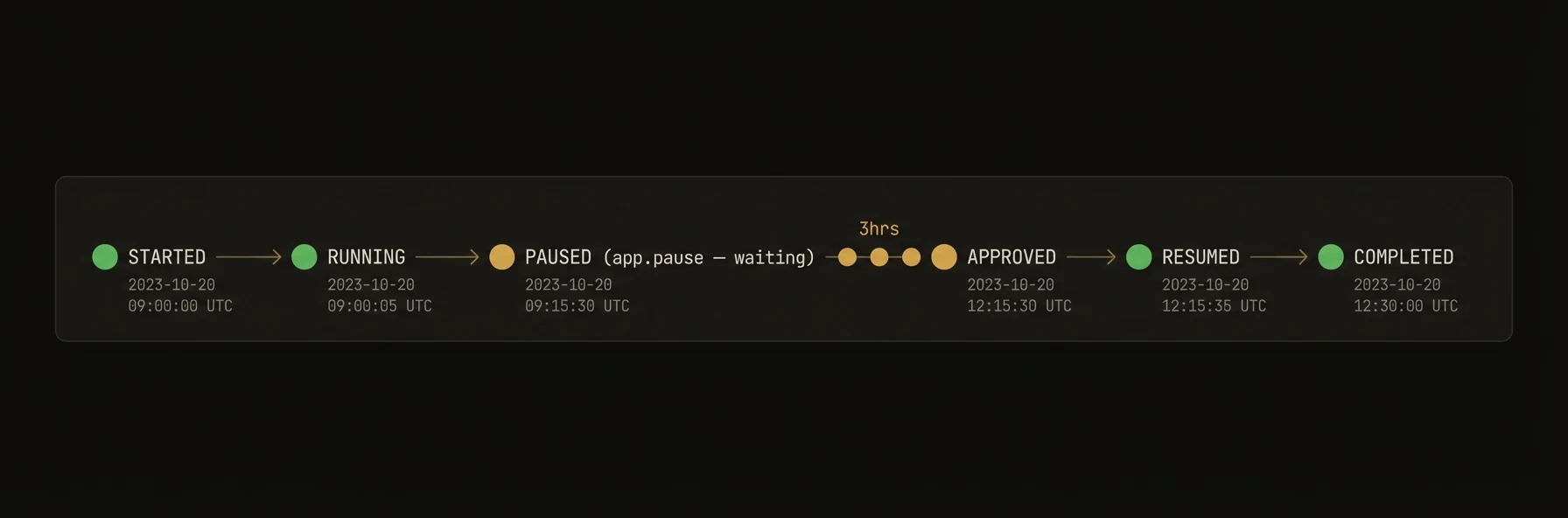

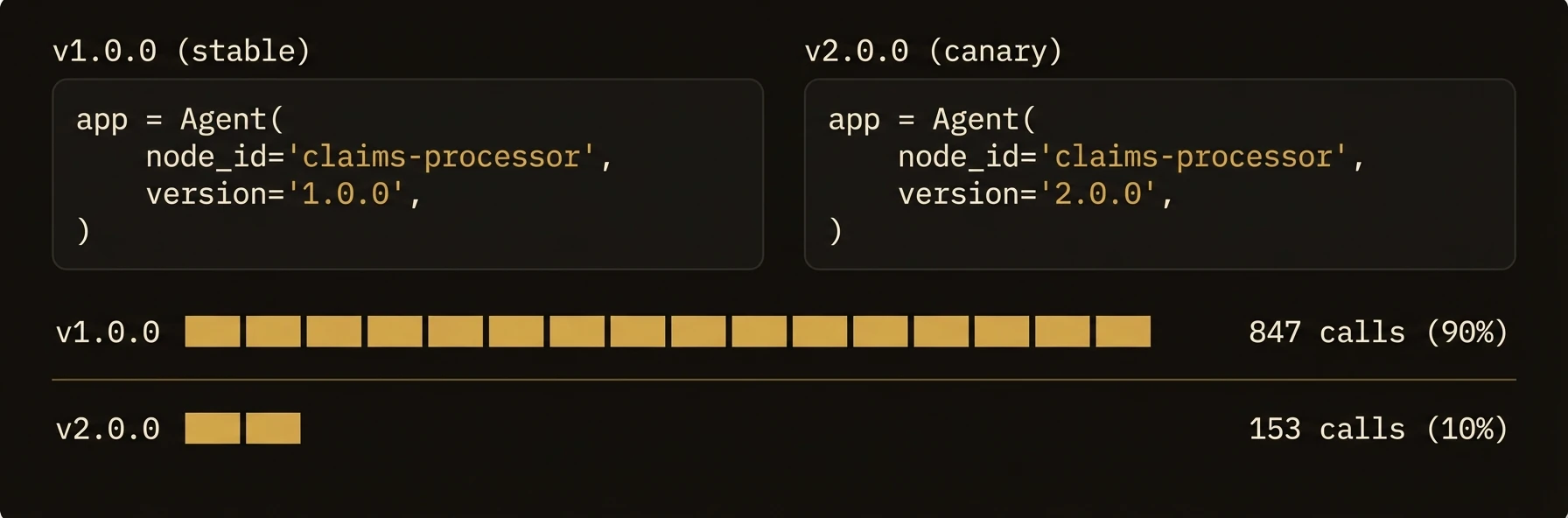

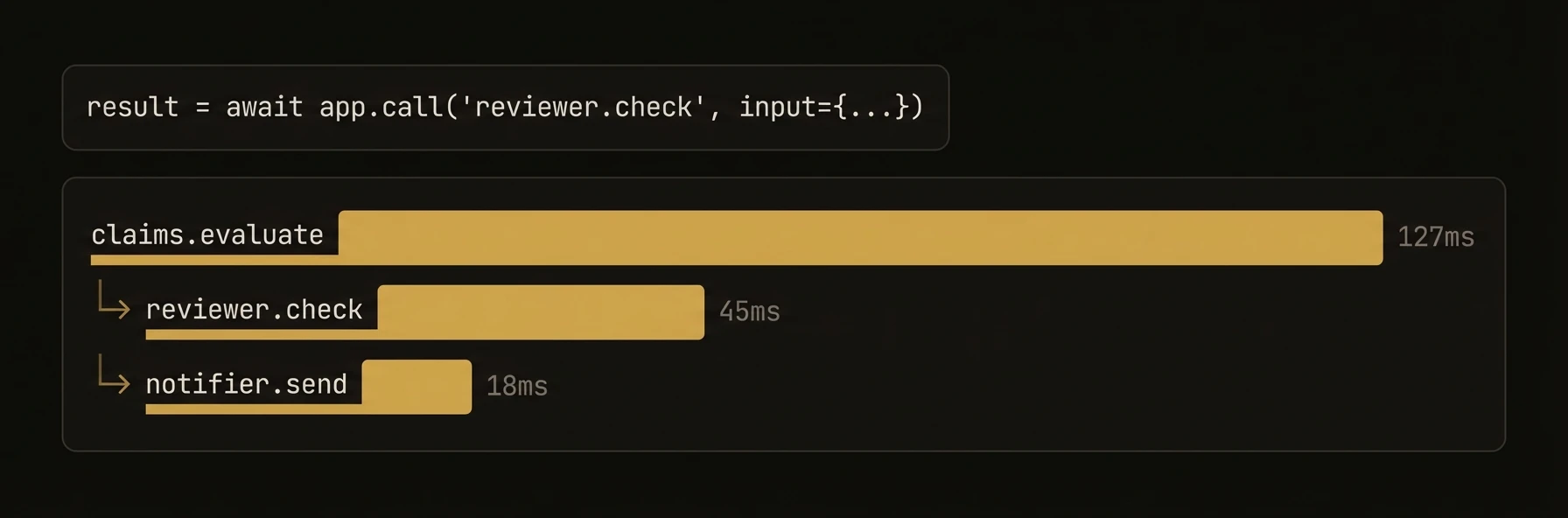

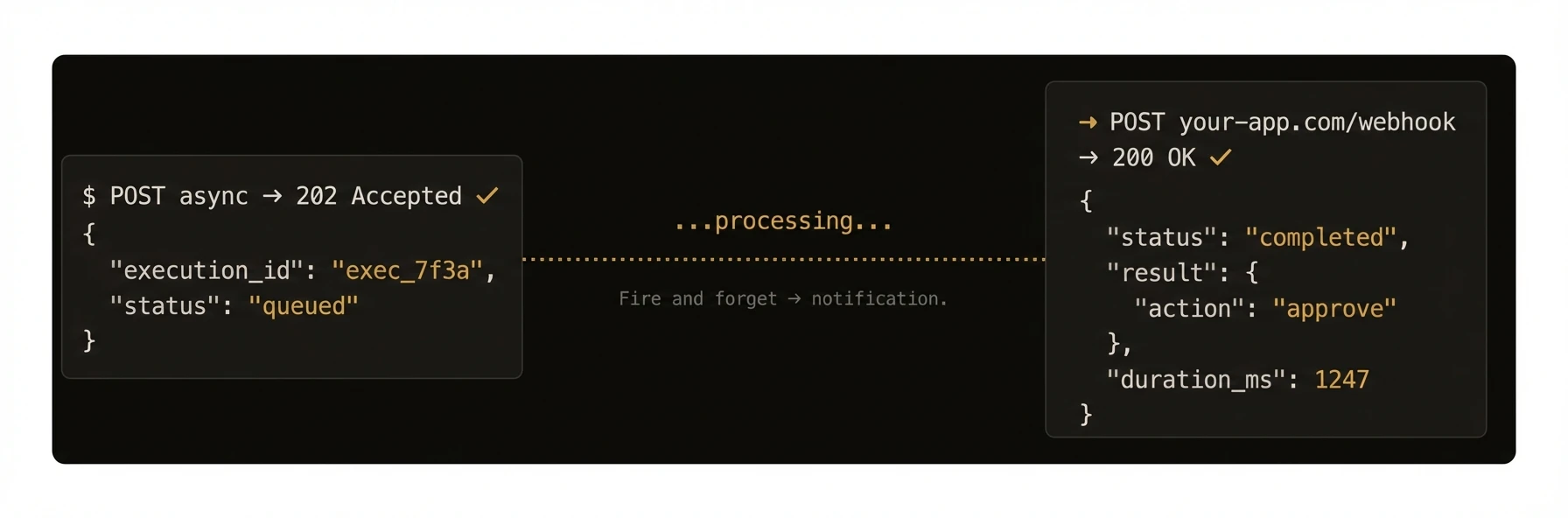

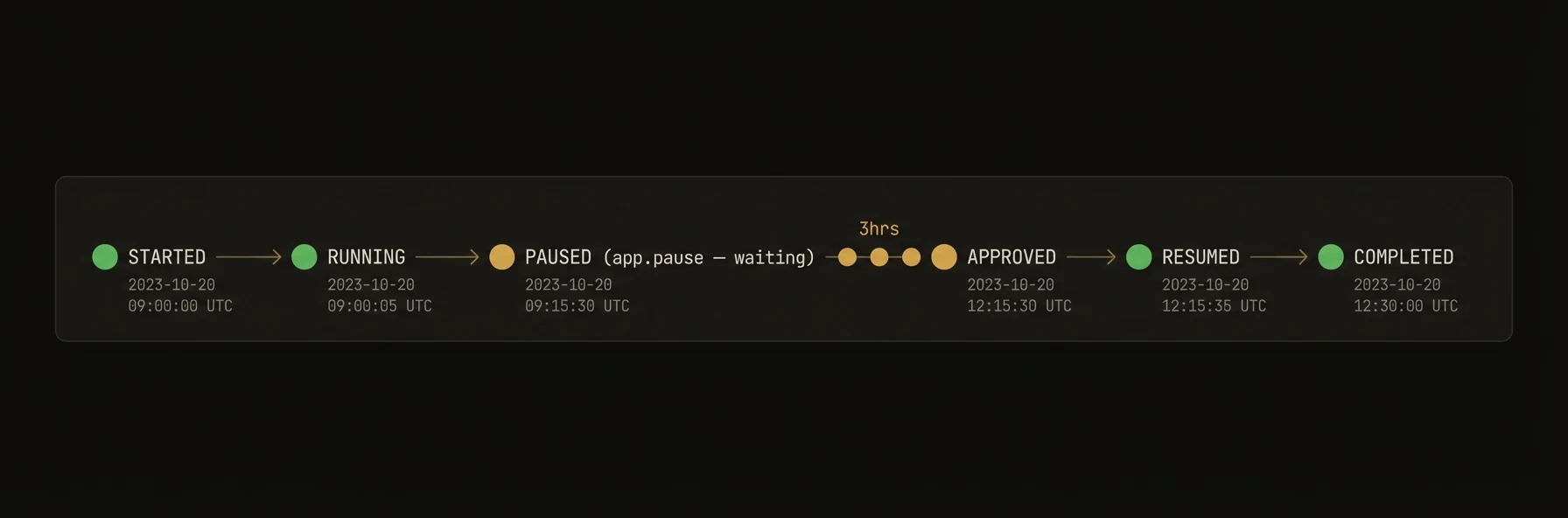

## Cross-Agent Calls

URL: https://agentfield.ai/docs/build/coordination/cross-agent-calls

Markdown: https://agentfield.ai/llm/docs/build/coordination/cross-agent-calls

Last-Modified: 2026-03-24T17:04:50.000Z

Category: coordination

Difficulty: intermediate

Keywords: call, cross-agent, rpc, execution, workflow, context-propagation

Summary: Call reasoners and skills on other agents through the control plane execution gateway.

Deterministic functions for business logic that need the same infrastructure as reasoners -- without the AI.

This separation matters in production. When something breaks, you want to know whether the failure came from AI judgment or deterministic code. Skills are where you put systems-of-record access, calculations, API calls, and repeatable side effects.

### Python

```python

from agentfield import Agent

from pydantic import BaseModel

app = Agent(node_id="inventory-service", version="1.0.0")

class StockQuery(BaseModel):

sku: str

warehouse: str = "us-east"

@app.skill(tags=["database", "inventory"])

async def check_stock(query: StockQuery) -> dict:

"""Fetch deterministic inventory state."""

row = await db.execute(

"SELECT sku, qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2",

query.sku, query.warehouse,

)

if not row:

return {"sku": query.sku, "available": 0, "status": "not_found"}

available = row["qty"] - row["reserved"]

return {"sku": query.sku, "available": available, "status": "in_stock" if available > 0 else "out_of_stock"}

@app.skill(tags=["integrations", "shipping", "policy"])

async def fulfillable_quote(sku: str, dest: str, weight_kg: float) -> dict:

stock = await check_stock(StockQuery(sku=sku))

if stock["available"] <= 0:

return {"sku": sku, "fulfillable": False, "reason": "out_of_stock"}

rates = await fedex_client.rate_quote(origin="us-east", dest=dest, weight=weight_kg)

return {"sku": sku, "fulfillable": True, "rates": rates, "currency": "USD"}

app.run()

# → POST /skills/check_stock — auto-generated REST endpoint with input validation

# → POST /skills/fulfillable_quote — discoverable by any agent in the fleet

```

### TypeScript

```typescript

import { Agent } from '@agentfield/sdk';

const agent = new Agent({ nodeId: 'inventory-service', version: '1.0.0' });

agent.skill('check_stock', async (ctx) => {

const { sku, warehouse = 'us-east' } = ctx.input;

const row = await db.query('SELECT sku, qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2', [sku, warehouse]);

if (!row) return { sku, available: 0, status: 'not_found' };

const available = row.qty - row.reserved;

return { sku, available, status: available > 0 ? 'in_stock' : 'out_of_stock' };

}, {

tags: ['database', 'inventory'],

description: 'Query real-time inventory by SKU and warehouse',

});

agent.skill('fulfillableQuote', async (ctx) => {

const stock = await agent.call('inventory-service.check_stock', {

sku: ctx.input.sku,

warehouse: 'us-east',

});

if (stock.available <= 0) {

return { sku: ctx.input.sku, fulfillable: false, reason: 'out_of_stock' };

}

const rates = await fedexClient.rateQuote({

origin: 'us-east',

dest: ctx.input.dest,

weight: ctx.input.weightKg,

});

return { sku: ctx.input.sku, fulfillable: true, rates, currency: 'USD' };

}, {

tags: ['integrations', 'shipping', 'policy'],

description: 'Check fulfillment viability and return shipping options',

});

agent.serve();

// → POST /skills/check_stock — auto-generated REST endpoint with input validation

// → POST /skills/fulfillableQuote — discoverable by any agent in the fleet

```

### Go

```go

// The Go SDK does not have a separate skill registration method.

// Use RegisterReasoner for all functions, whether AI-powered or deterministic.

// This registers /reasoners/check_stock, not /skills/check_stock.

a.RegisterReasoner("check_stock", func(ctx context.Context, input map[string]any) (any, error) {

sku, _ := input["sku"].(string)

warehouse, _ := input["warehouse"].(string)

if warehouse == "" {

warehouse = "us-east"

}

row, err := db.QueryRow(ctx, "SELECT qty, reserved FROM stock WHERE sku = $1 AND warehouse = $2", sku, warehouse)

if err != nil {

return map[string]any{"sku": sku, "available": 0, "status": "not_found"}, nil

}

available := row.Qty - row.Reserved

status := "out_of_stock"

if available > 0 {

status = "in_stock"

}

return map[string]any{"sku": sku, "available": available, "status": status}, nil

},

agent.WithDescription("Query real-time inventory by SKU and warehouse"),

agent.WithReasonerTags("database", "inventory"),

)

```

---

**What just happened**

- Skills handled deterministic state and policy logic without any model call

- The second skill composed the first skill into a production-shaped workflow

- Both skills inherited the same endpoint generation, discoverability, and execution tracking as reasoners

- In Go, the same deterministic pattern is registered with `RegisterReasoner` and exposed under `/reasoners/{name}` instead of `/skills/{name}`

Example proof:

```text

Python/TypeScript:

POST /skills/check_stock

POST /skills/fulfillable_quote

discoverable target: inventory-service.fulfillable_quote

Go:

POST /reasoners/check_stock

```

### Skills vs Reasoners

| Aspect | Reasoner | Skill |

|--------|----------|-------|

| **Purpose** | AI-powered inference and generation | Deterministic business logic |

| **LLM calls** | Typically uses `app.ai()` or `app.harness()` | Typically no LLM calls |

| **Output** | May vary across runs | Same input always produces same output |

| **Use cases** | Classification, generation, analysis | API calls, database ops, calculations, formatting |

| **Endpoint prefix** | `/reasoners/{name}` | `/skills/{name}` |

Both share the same execution infrastructure: workflow tracking, execution context, verifiable credentials, and cross-agent communication.

### Patterns

### Database integration skill

Skills are ideal for wrapping database queries with validation and consistent return shapes:

### Python

```python

from pydantic import BaseModel

from typing import Optional

class UserQuery(BaseModel):

user_id: str

fields: list[str] = ["name", "email"]

@app.skill(tags=["database", "users"])

async def get_user(query: UserQuery) -> dict:

# Skills can be async for I/O operations

user = await db.users.find_one({"_id": query.user_id})

if not user:

return {"error": "User not found", "user_id": query.user_id}

return {k: user.get(k) for k in query.fields if k in user}

```

### TypeScript

```typescript

agent.skill('get_user', async (ctx) => {

const { user_id, fields = ['name', 'email'] } = ctx.input;

const user = await db.users.findOne({ _id: user_id });

if (!user) {

return { error: 'User not found', user_id };

}