The AI Agent Accountability Gap

Last month, a returns agent at an e-commerce company approved a $12,000 refund it shouldn't have, and the strange thing was that nothing looked wrong. The logs showed no errors, the model returned a valid response, every validation check passed. The problem wasn't that the agent failed, it's that no one could explain why it made that call in the first place. This is becoming the new failure mode for AI systems: not crashes, not exceptions, but decisions that technically work and yet can't be justified to anyone after the fact.

Every serious software company is eventually going to run two backends, and I think most people building in this space haven't fully internalized what that means. The first backend is the one we all know, where the request comes in, code runs, response goes out. We've spent fifty years building tooling for this: compilers, databases, observability, IAM, all built around a single assumption that humans make decisions, encode them in code, and machines execute them exactly as instructed.

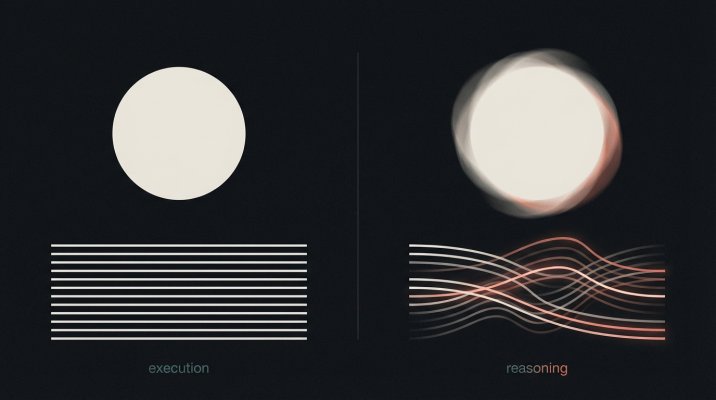

Two parallel backends: deterministic execution and stochastic reasoning

The second backend handles reasoning, and that's where everything we've built starts to break down. It doesn't follow instructions in the traditional sense rather it makes judgment calls. Same input can produce different output depending on context, and there's no stack trace that explains why it picked one path over another because the reasoning itself is the product, not just a means to an output.

We have everything we need for the first backend. The second? We're still figuring out the foundations.

Authority Without Accountability

When you hand off a decision to a person, authority and accountability travel together—you can't really use one without bearing the other. This has been true as long as organizations have existed, and most of our management structures, legal frameworks, and audit practices are built on this assumption without even thinking about it.

AI breaks this arrangement in a way that I don't think we've fully grappled with yet. These agents can approve loans, route support tickets, authorize refunds, flag transactions, they process more calls in an hour than a human team handles in a month. But they can't be held responsible in any meaningful sense, because responsibility requires something they don't have: understanding, intention, the capacity to have chosen otherwise.

I've seen teams build agents that perform convincingly in demos and then degrade in production, not because the agents are flawed, but because the surrounding systems were never designed to surface when judgment breaks down. It's because there's no tooling to surface when confidence is low or authority is unclear, especially when scale starts to kick in. The logs show green, the metrics look fine, and somewhere a call was made that no one can reconstruct after the fact.

This is the structural problem we're dealing with: we're building agents that make judgment calls without being able to answer for those calls. Authority gets passed down but accountability stays behind, distributed across the humans who trained, deployed, and supervised the system. Better alignment won't fix this because it's baked into how the technology works, AI can act autonomously, but it can't be responsible in the way we need actors to be responsible.

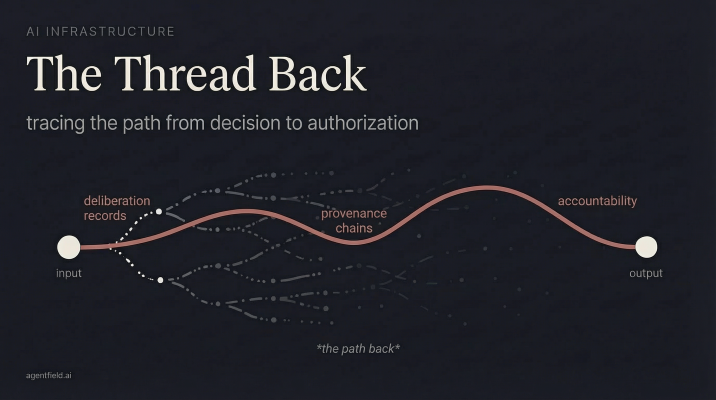

What we actually need are chains of provenance that trace every decision back to the humans who authorized it, audit records that capture not just what was decided but the reasoning that led there, and identity systems that make handoffs explicit and verifiable. In practice, that means cryptographic proof that an agent was acting under a specific grant of human authority, scoped to a defined class of decisions and bounded by clear limits.

These requirements didn't emerge from theory; they surfaced repeatedly while trying to move agents from demonstrations into real operational backends, where decisions carry consequences and ownership has to be unambiguous.

The Deliberation Record

When a doctor makes a diagnosis, the medical record does not simply note the treatment. It captures the reasoning behind it: which symptoms pointed in which direction, which tests ruled out alternatives, and why one course of action was chosen over another. The record exists to preserve the thinking, not just the outcome.

Software has never required this kind of record. Code executes deterministically. If something goes wrong, you inspect the logs, and the logs are sufficient because the system did exactly what it was instructed to do. There is no meaningful gap between instruction and action that needs to be reconstructed after the fact.

AI systems behave differently. A model evaluates possibilities, weighs competing signals, and converges on a response. Unless the system is explicitly designed to retain that process, the reasoning disappears the moment the output is produced. What remains is a decision severed from the path that led to it, closer to a medical chart that records a prescription without any indication of how the diagnosis was reached.

Production AI will require something closer to a deliberation record: a structured account of what was considered, how different factors were weighed, and why a particular judgment prevailed. Unlike traditional logs, which record a linear sequence of events, deliberation unfolds across interacting signals that reinforce, counterbalance, and sometimes override one another. The severity of an issue may push toward escalation while a customer's history pulls in the opposite direction, with operational constraints exerting their own pressure on the outcome. These considerations do not resolve themselves in a tidy sequence, rather they coexist and compete, and the final decision emerges from that tension rather than from any single step in isolation.

This marks a fundamental shift in how systems need to be understood. Traditional software executes actions, and our observability tooling has been built accordingly. AI systems, by contrast, operate through judgment, weighing competing considerations before arriving at an outcome. In that context, knowing what a model returned is insufficient; understanding what it evaluated and how those factors influenced the result becomes essential to understanding the system itself.

Confidence as Product

Type systems, assertions, unit tests, every tool we've built in the last fifty years exists to guarantee certainty. If the output is ambiguous, something went wrong and you need to fix it.

AI inverts this completely. A model that says "80% chance of fraud" is actually more useful than one that says "fraud" or "not fraud" because the uncertainty is the signal. Suppress it and you've thrown away information; force a binary classification and you've hidden the cases where the model was basically guessing.

This requires different architecture than what we're used to building. Instead of code that fails when confidence is low, you need code that routes on confidence - high-confidence calls go straight through while low-confidence ones get more reasoning, different models, or human review. Confidence becomes a resource you budget, like compute or memory.

We don't have good primitives for this yet, which is part of why I'm working on it. HTTP status codes don't include "206 Partially Confident." Error handling is still binary: success or exception. The tooling assumes that if it didn't throw, it worked, and for AI backends that assumption produces agents that return wrong answers confidently with no indication that anything's off.

What matters in practice is not traditional service health, but calibration, the degree to which a model's expressed confidence corresponds to the actual reliability of its judgments.

What This Looks Like in Practice

The old way of handling something like returns was hardcoded rules: return request under $50 and within 30 days, auto-approve; customer has more than two returns this month, flag for review. Clear, deterministic, auditable. Also brittle, every edge case needs a new rule, and the rules start interacting in ways nobody fully tracks.

The new way is that the agent evaluates context: order history, customer lifetime value, the nature of the complaint, similar cases, current inventory levels. It reaches a judgment.

The moment it does, questions emerge that current tooling can't answer. How confident was the agent? It approved the return, but was it 95% sure or 55% sure? Did it consider escalating to a human and decide against it? A 55%-confident approval that turns out fraudulent is a different failure than a 95%-confident one, the former suggests broken routing, the latter suggests broken reasoning, and you need to know which.

Who authorized it to decide? The agent made the call, but whose authority was it using? Which human set the rules? When someone set the $50 threshold, did they anticipate this kind of context-driven reasoning being applied on top of it? The chain from human authorization to agent action needs to be traceable.

What factors did it weigh? The customer complained about a defective product, and the agent saw three similar complaints this week. Did it factor that in? Should it have? If a pattern emerges that the product is actually defective, will anyone notice the agents were routing around the problem rather than escalating it?

Right now there's no chain of provenance, no deliberation records, no way to route on confidence. Just an outcome with no trail back to the thinking that produced it.

The Tooling I'm Building

Not every decision needs this level of tooling, a chatbot that suggests products can fail without catastrophe. But the moment an agent approves a refund, authorizes access, or routes a complaint, you've entered territory where accountability actually matters.

Tracing back the reasoning chain when thousands of autonomous systems collaborate on business goals

The agent frameworks that exist today are excellent for building agents, they're Flask for AI, and you can stand up a working prototype in an afternoon. But Flask doesn't run your payment systems. Production needs something else: identity, orchestration, observability, governance. Tooling that turns single-service experiments into reliable distributed setups.

AI backends need the same evolution we saw with web services. When an agent makes a call five hops down a chain of command, you need to trace the authority back to the human who granted it. When reasoning fails, you need to know what was considered, not just that an error occurred, but which factors were weighted, which alternatives rejected, where the judgment went wrong. When confidence drops below threshold, you need code that escalates rather than guesses.

I think of it as the reasoning layer: a parallel tech stack for a different kind of operation, sitting alongside your services, handling the calls that used to be hardcoded, with the accountability tooling that judgment requires.

What Comes Next

Companies are going to automate judgment, every company with more calls than people to make them will eventually reach this conclusion because the economics are too compelling and the technology has crossed the threshold of usefulness.

Most teams are deploying without, building on frameworks designed for prototyping and running in production environments built for deterministic code. Some of those gaps won't matter. Others will surface as audit failures, unexplainable calls, accountability vacuums when something goes wrong and nobody can figure out why.

You could argue the foundation should come first, that we should solve accountability before scaling deployment. But companies won't wait, the economics of automated judgment are too compelling, and the technology is ready enough to ship. So we're building the primitives now: deliberation records, provenance chains, confidence routing, identity tooling that makes handoffs explicit. Racing to close the gap before it becomes catastrophic.

The agents that make judgment calls need tooling that can account for them. That's the work.